The FGCB_ADD_REMOVE Latch

In my previous article I kicked off a new series on latches by explain what they are, why they’re needed, and the mechanics of how they work, and I strongly recommend that you read that article before this one. In this article I’m going to discuss the FGCB_ADD_REMOVE latch and show how it can be a bottleneck.

What is the FGCB_ADD_REMOVE Latch?

Most latch class names are tied directly to the data structure that they protect. The FGCB_ADD_REMOVE latch protects a data structure called an FGCB, or File Group Control Block, and there will be one of these latches for each online filegroup of each online database in a SQL Server instance. Whenever a file in a filegroup is added, dropped, grown, or shrunk, the latch must be acquired in EX mode, and when figuring out the next file to allocate from, the latch must be acquired in SH mode to prevent any filegroup changes. (Remember that extent allocations for a filegroup are performed on a round-robin basis through the files in the filegroup, and also take into account proportional fill, which I explain here.)

How Does the Latch Become a Bottleneck?

The most common scenario when this latch becomes a bottleneck is as follows:

- There’s a single-file database, so all the allocations must come from that one data file

- The autogrow setting for the file is set to be very small (remember, before SQL Server 2016, the default autogrow setting for data files was 1MB!)

- There are many concurrent operations requiring space to be allocated (e.g. a constant insert workload from many client connections)

In this case, even though there’s only one file, a thread requiring an allocation still has to acquire the FGCB_ADD_REMOVE latch in SH mode. It will then try to allocate from the single data file, realize there’s no space, and then acquire the latch in EX mode so it can then grow the file.

Let’s imagine that eight threads running on eight separate schedulers all try to allocate at the same time, and all realize there’s no space in the file so they need to grow it. They will each attempt to acquire the latch in EX mode. Only one of them will be able to acquire it and it will proceed to grow the file and the others will have to wait, with a wait type of LATCH_EX and a resource description of FGCB_ADD_REMOVE plus the memory address of the latch.

The seven waiting threads are in the latch’s first-in-first-out (FIFO) waiting queue. When the thread performing the file growth is done, it releases the latch and grants it to the first waiting thread. This new owner of the latch goes to grow the file and discovers that it’s already been grown and there’s nothing to do. So it releases the latch and grants it to the next waiting thread. And so on.

The seven waiting threads all waited for the latch in EX mode but ended up doing nothing once they were granted the latch, so all seven threads essentially wasted elapsed time, with the amount of time being wasted increasing a little bit for each thread the further down the FIFO waiting queue it was.

Showing the Bottleneck

Now I’m going to show you the exact scenario above, using extended events. I’ve created a single-file database with a tiny autogrow setting, and hundreds of concurrent connections simply inserting data into a table.

I can use the following extended event session to see what’s going on:

-- Drop the session if it exists.

IF EXISTS

(

SELECT * FROM sys.server_event_sessions

WHERE [name] = N'FGCB_ADDREMOVE'

)

BEGIN

DROP EVENT SESSION [FGCB_ADDREMOVE] ON SERVER;

END

GO

CREATE EVENT SESSION [FGCB_ADDREMOVE] ON SERVER

ADD EVENT [sqlserver].[database_file_size_change]

(WHERE [file_type] = 0), -- data files only

ADD EVENT [sqlserver].[latch_suspend_begin]

(WHERE [class] = 48 AND [mode] = 4), -- EX mode

ADD EVENT [sqlserver].[latch_suspend_end]

(WHERE [class] = 48 AND [mode] = 4) -- EX mode

ADD TARGET [package0].[ring_buffer]

WITH (TRACK_CAUSALITY = ON);

GO

-- Start the event session

ALTER EVENT SESSION [FGCB_ADDREMOVE]

ON SERVER STATE = START;

GO

The session is tracking when a thread enters the latch’s waiting queue, when it leaves the queue (i.e. when it is granted the latch), and when a data file growth occurs. Using causality tracking means we can see a timeline of the actions by each thread.

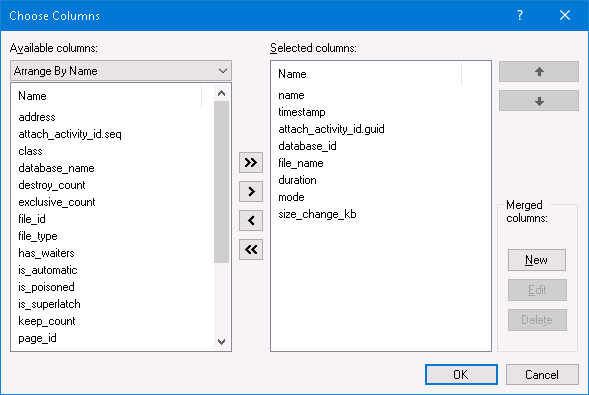

Using SQL Server Management Studio, I can select the Watch Live Data option for the extended event session and see all the extended event activity. If you want to do the same, in the Live Data window, right click on one of the column names at the top and change the selected columns to be as below:

I let the workload run for a few minutes to reach a steady state and then saw a perfect example of the scenario I described above:

Using the attach_activity_id.guid values to identify different threads, we can see that seven threads start waiting for the latch within 61.5 microseconds. The thread with the GUID value starting 8D57 acquires the latch in EX mode (the latch_suspend_end event) and then immediately grows the file (the database_file_size_change event). The 8D57 thread then releases the latch and grants it in EX mode to the 6F82 thread, which waited for 85 milliseconds. It has nothing to do so it grants the latch to the 672B thread. And so on, until the EDB8 thread is granted the latch, after waiting for 202 milliseconds.

In total, the six threads that waited for no reason waited for almost 1 second. Some of that time is signal wait time, where even though the thread has been granted the latch, it still needs to move up to the top of the scheduler’s runnable queue before it can get onto the processor and execute code. You might say that this isn’t a fair measure of time spent waiting for the latch, but it absolutely is, because the signal wait time wouldn’t have been incurred if the thread hadn’t had to wait in the first place.

Furthermore, you might think that a 200 millisecond delay isn’t that much, but it all depends on the performance service-level agreements for the workload in question. We have multiple high-volume clients where if a batch takes more than 200 milliseconds to execute, it’s not permitted on the production system!

Summary

If you’re monitoring waits on your server and you notice LATCH_EX is one of the top waits, you can use the code in this post so see if FGCB_ADD_REMOVE is one of the culprits.

The easiest way to make sure that your workload isn’t hitting an FGCB_ADD_REMOVE bottleneck is to make sure that there are no data file autogrow settings that are configured using the pre-SQL Server 2016 defaults. In the sys.master_files view, the 1MB default would show as a data file (type_desc column set to ROWS) with the is_percent_growth column set to 0, and the growth column set to 128.

Giving a recommendation for what autogrow should be set to be is a whole other discussion, but now you know of a potential performance impact from not changing the defaults in earlier versions.

Paul,

im curious if you know anything about the internals of the Synapse Polaris distributed engine that you could blog about

Nothing at all I'm afraid.

I just started to see this wait in Azure SQL DB today.