Security Features in SQL Server 2017

Microsoft has a number of security features in SQL Server 2017 that are useful for different purposes, depending on what you are trying to protect and what threat(s) you are trying to protect against. Some of these security features can have performance implications that you should be aware of as you decide which ones you want to implement. As an introduction, I’ll cover some of the highlights of several of these security features.

Transparent Data Encryption (TDE)

Transparent Data Encryption (TDE) is SQL Server’s form of encryption at rest, which means that your data files, log file, tempdb files, and your SQL Server full, differential, and log backups will be encrypted when you enable TDE on a user database. This protects your data from someone getting access to those database or database backup files as long as that person doesn’t also have access to your encryption certificates and keys.
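As a rough illustration of what enabling TDE on a user database involves, the following is a minimal sketch rather than a production script. The database name SalesDB, the certificate name TDE_Cert, the file paths, and the placeholder passwords are all hypothetical; substitute your own values.

```sql
-- Minimal sketch of enabling TDE. SalesDB, TDE_Cert, the paths, and the
-- passwords are hypothetical placeholders, not values from this article.
USE master;
GO
-- The database master key in master protects the TDE certificate.
CREATE MASTER KEY ENCRYPTION BY PASSWORD = '<StrongPasswordHere>';
GO
CREATE CERTIFICATE TDE_Cert WITH SUBJECT = 'TDE certificate for SalesDB';
GO
-- Back up the certificate and private key right away. Without them, the
-- encrypted database and its backups cannot be restored on another server.
BACKUP CERTIFICATE TDE_Cert
    TO FILE = 'D:\Keys\TDE_Cert.cer'
    WITH PRIVATE KEY (
        FILE = 'D:\Keys\TDE_Cert.pvk',
        ENCRYPTION BY PASSWORD = '<AnotherStrongPassword>'
    );
GO
USE SalesDB;
GO
-- The database encryption key is protected by the server certificate.
CREATE DATABASE ENCRYPTION KEY
    WITH ALGORITHM = AES_256
    ENCRYPTION BY SERVER CERTIFICATE TDE_Cert;
GO
-- This starts the background encryption scan.
ALTER DATABASE SalesDB SET ENCRYPTION ON;
GO
```

Store the certificate backup and its password somewhere other than the database backup location, since anyone holding both can restore and read the database.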

The initial TDE encryption scan for a user database will use one background CPU thread per data file in the database (if the data files are located on separate logical drives) to slowly read the entire contents of each data file into memory at a rate of about 52MB/second per data file.

The data is then encrypted with your chosen encryption algorithm and written back out to the data file at about 55MB/second (per data file, if they are on separate logical drives). These sequential read and write rates appear to be purposely throttled, and they were consistent in my testing on multiple systems with various types of storage.

The initial TDE encryption process happens at the page level, underneath SQL Server, so it does not cause locking or generate transaction log activity like you would see with rebuilding an index. You can pause a TDE encryption scan by enabling global trace flag (TF) 5004, and un-pause it by disabling TF 5004 and running your ALTER DATABASE dbNAME SET ENCRYPTION ON command again, with no loss of progress.
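The pause and resume sequence described above could look something like the following sketch. The database name SalesDB is a hypothetical placeholder, and the progress query against sys.dm_database_encryption_keys is an addition for monitoring, not part of the pause mechanism itself.

```sql
-- Sketch of pausing and resuming a TDE encryption scan.
-- SalesDB is a hypothetical database name.

-- Pause: enable trace flag 5004 globally (-1 means all sessions).
DBCC TRACEON (5004, -1);
GO

-- Check progress at any point. An encryption_state of 2 means encryption
-- is in progress, and 3 means the database is fully encrypted.
SELECT DB_NAME(database_id) AS database_name,
       encryption_state,
       percent_complete
FROM sys.dm_database_encryption_keys;
GO

-- Resume: disable the trace flag, then issue the command again.
DBCC TRACEOFF (5004, -1);
GO
ALTER DATABASE SalesDB SET ENCRYPTION ON;
GO
```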

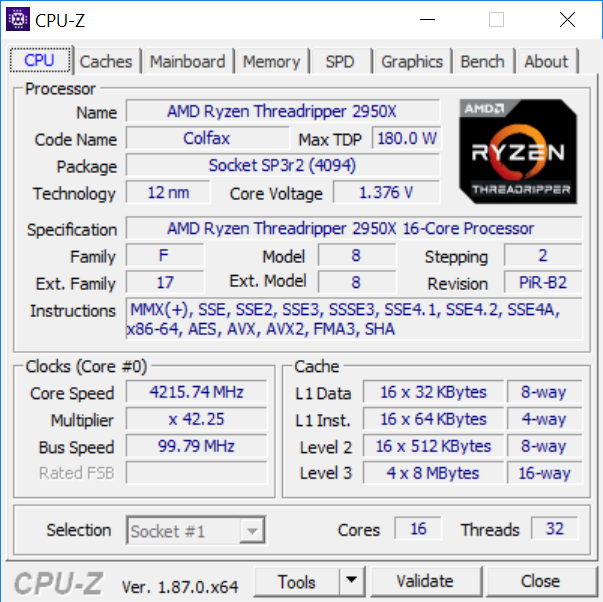

The CPU impact of encryption/decryption is greatly reduced on SQL Server 2016 and newer if you have a processor that supports AES-NI hardware instructions. In the server space, these were introduced in the Intel Xeon 5600 product family (Westmere-EP) for two-socket servers and the Intel Xeon E7-4800/8800 product family (Westmere-EX) for four and eight-socket servers. Any newer Intel product family will also have AES-NI support. If you are in doubt about whether your processor supports AES-NI, you can look for “AES” in the instructions field output from CPU-Z, like you see in Figure 1.

Figure 1: CPU-Z Output Showing AES Instruction Support

After you have encrypted a database with TDE, the runtime impact is hard to quantify predictably because it depends entirely on your workload. If, for example, your workload fits entirely in the SQL Server buffer pool, then there would be essentially zero overhead from TDE. If, on the other hand, your workload consisted entirely of table scans where each page is read and then flushed almost immediately, that would impose the maximum penalty. The maximum penalty for an I/O volatile workload is typically less than 5% with modern hardware and with SQL Server 2016 or later.

The extra work of TDE decryption happens when you read data into the buffer pool from storage, and the extra work of TDE encryption happens when you write the data back out to storage. Making sure you are not under memory pressure, by having a large enough buffer pool and by doing index and query tuning, will reduce the performance impact of TDE. TDE does not encrypt FILESTREAM data, and a TDE encrypted database will not use instant file initialization for its data files.

Before SQL Server 2016, TDE and native backup compression did not work well together. With SQL Server 2016 and later, you can use TDE and native backup compression together as long as you specify a MAXTRANSFERSIZE that is greater than 64K in the backup command. It is also very important that you are current with your cumulative updates, since there have been multiple important TDE-related hotfixes for both SQL Server 2016 and SQL Server 2017. Finally, TDE is still an Enterprise Edition only feature, even after SQL Server 2016 SP1 (which added many Enterprise-only features to Standard Edition).
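A compressed backup of a TDE-encrypted database might be written along the lines of this sketch. The database name, backup path, and the specific MAXTRANSFERSIZE of 131072 bytes (128K) are illustrative assumptions; any valid value above 65536 (64K) meets the requirement described above.

```sql
-- Sketch: compressed backup of a TDE-encrypted database.
-- SalesDB and the backup path are hypothetical placeholders.
BACKUP DATABASE SalesDB
    TO DISK = N'D:\SQLBackups\SalesDB_Full.bak'
    WITH COMPRESSION,
         MAXTRANSFERSIZE = 131072,  -- 128K, must be greater than 65536 (64K)
         CHECKSUM,
         STATS = 10;
GO
```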

Row-Level Security (RLS)

Row-Level Security (RLS) limits read access and most write-level access based on attributes of the user. RLS uses what is called predicate-based access control. SQL Server applies the access restrictions in the database tier, and they will be applied every time that data access is attempted from any tier.

RLS works by creating a predicate function that limits the rows that a user can access and then using a security policy and security predicate to apply that function to a table.

There are two types of security predicates, which are filter predicates and block predicates. Filter predicates will silently filter the rows available to read operations (SELECT, UPDATE, and DELETE), by essentially adding a WHERE clause that prevents the filtered rows from showing up in the result set. Filter predicates are applied while reading the data from the base table, and the user or application won’t know that rows are being filtered from the results. It is important, from a performance perspective, to have a row store index that covers your RLS filter predicate.

Block predicates explicitly block (with an error message) write operations (AFTER INSERT, AFTER UPDATE, BEFORE UPDATE, and BEFORE DELETE) that would violate the block predicate.

Filter and block predicates are created as inline table-valued functions. You will also need to use the CREATE SECURITY POLICY T-SQL statement to apply the predicate function to the relevant base table and enable it.
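To make the pieces concrete, here is a minimal sketch that ties a predicate function, a supporting index, and a security policy together. The table dbo.Sales, the Security schema, the function and policy names, and the 'Manager' user are all hypothetical, and the rule itself (a sales rep sees only their own rows) is just one possible policy.

```sql
-- Sketch of Row-Level Security. All object names here are hypothetical.
CREATE TABLE dbo.Sales
(
    OrderID  int         NOT NULL PRIMARY KEY,
    SalesRep sysname     NOT NULL,  -- database user who owns the row
    Product  varchar(50) NOT NULL,
    Qty      int         NOT NULL
);
GO
-- Rowstore index covering the column used by the filter predicate.
CREATE NONCLUSTERED INDEX IX_Sales_SalesRep ON dbo.Sales (SalesRep);
GO
CREATE SCHEMA Security;
GO
-- Inline table-valued function: returns a row only when access is allowed.
CREATE FUNCTION Security.fn_SalesRepPredicate (@SalesRep AS sysname)
    RETURNS TABLE
    WITH SCHEMABINDING
AS
    RETURN SELECT 1 AS fn_result
           WHERE @SalesRep = USER_NAME()
              OR USER_NAME() = 'Manager';
GO
-- The security policy binds the function to the table. The filter predicate
-- hides rows from reads; the block predicate rejects inserts of rows that
-- the inserting user would not be allowed to see.
CREATE SECURITY POLICY Security.SalesPolicy
    ADD FILTER PREDICATE Security.fn_SalesRepPredicate(SalesRep)
        ON dbo.Sales,
    ADD BLOCK PREDICATE Security.fn_SalesRepPredicate(SalesRep)
        ON dbo.Sales AFTER INSERT
    WITH (STATE = ON);
GO
```

Keeping the predicate function simple and schema-bound, with an index on the column it tests, is what gives the optimizer a chance to treat the filter like an ordinary WHERE clause.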

RLS was added in SQL Server 2016 and is available in all editions of SQL Server 2016 and newer. RLS will not work with FILESTREAM, PolyBase, and indexed views. RLS can hurt the performance of Full-Text Search, and it can force columnstore index queries to end up using row mode instead of batch mode. This Microsoft blog post has more information about the performance impact of RLS. A good example of how to use Query Store to tune RLS performance is here.

Dynamic Data Masking (DDM)

Dynamic data masking (DDM) can help limit sensitive data exposure by masking it from non-privileged users. DDM is applied at the table level, so all queries are affected by the data masking, while the actual masking rules are applied to individual columns in the table.

There are four types of data masks that you can use: default, email, custom string, and random. A default mask provides full default masking of the data depending on the data type. For example, a string data type would get a mask of ‘XXXX’ instead of the actual data. An email mask will return the first letter of the actual email address, followed by XXX@XXXX.com, regardless of the actual domain suffix. A custom string mask will show the first and last letters of the string, with a custom padding in the middle. Finally, a random mask is used to mask numeric data and provide a random value in a defined range. Data masks can be created in a CREATE TABLE statement or with an ALTER COLUMN statement.
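The four mask types might be declared as in the following sketch. The table dbo.Customer and its columns are hypothetical, as are the padding string and the random range.

```sql
-- Sketch of the four DDM mask types. dbo.Customer is a hypothetical table.
CREATE TABLE dbo.Customer
(
    CustomerID  int IDENTITY(1,1) PRIMARY KEY,
    LastName    varchar(100) NOT NULL,
    -- default(): full masking based on the data type
    TaxID       varchar(20)  MASKED WITH (FUNCTION = 'default()') NULL,
    -- email(): first letter of the address, then XXX@XXXX.com
    Email       varchar(100) MASKED WITH (FUNCTION = 'email()') NULL,
    -- partial(): keep 1 leading and 1 trailing character, pad the middle
    Phone       varchar(12)  MASKED WITH (FUNCTION = 'partial(1,"XXXXXXX",1)') NULL,
    -- random(): replace a numeric value with a random one in the range
    DiscountPct int          MASKED WITH (FUNCTION = 'random(1, 100)') NULL
);
GO
-- Adding a mask to an existing column with ALTER COLUMN.
ALTER TABLE dbo.Customer
    ALTER COLUMN LastName ADD MASKED WITH (FUNCTION = 'partial(1,"XXXX",0)');
GO
```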

Dynamic data masking provides no masking for privileged users who can still directly query your tables and see the unmasked data. Masking rules cannot be used with encrypted columns (Always Encrypted), computed columns, or with Filestream data. If there are existing indexes on a column that you want to mask, you will have to drop the index, create the mask on the column, and then recreate the index. It is also possible to infer the values for masked data columns by writing queries that allow the user to eventually guess a value for a masked column.
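Both points can be seen with a quick test like the sketch below. It assumes a hypothetical table dbo.Customer that already has masked Email and DiscountPct columns, and a hypothetical test user named MaskingTestUser.

```sql
-- Sketch: how masking looks to a non-privileged user, and how values can
-- still be inferred. dbo.Customer and MaskingTestUser are hypothetical.
CREATE USER MaskingTestUser WITHOUT LOGIN;
GRANT SELECT ON dbo.Customer TO MaskingTestUser;
GO
EXECUTE AS USER = 'MaskingTestUser';

-- Masked values are returned for the masked columns.
SELECT CustomerID, Email, DiscountPct
FROM dbo.Customer;

-- Predicates are evaluated against the real values, so narrowing a range
-- lets the user home in on the actual number behind the mask.
SELECT CustomerID
FROM dbo.Customer
WHERE DiscountPct BETWEEN 20 AND 30;

REVERT;
GO
-- Granting UNMASK lets the user see the real data.
GRANT UNMASK TO MaskingTestUser;
GO
```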

Dynamic Data Masking was introduced in SQL Server 2016, and it is available in all editions of SQL Server. DDM is not meant to be a strong security measure like actual encryption, but on the other hand, its performance impact appears to be quite negligible.

1 thought on “Security Features in SQL Server 2017”

"The initial TDE encryption scan for a user database will use one background CPU thread per data file in the database (if the data files are located on separate logical drives), to slowly read the entire contents of the data file into memory at the rate of about 52MB/second per data file (if the data files are located on separate logical drives)"

What's the behavior if all database and log files sit on the same logical drive? We're looking at turning this on in bulk with around 350 DBs per instance, 3 instances per server, and are trying to figure out how many to do at any one time and what impact it's going to have.

thanks

Steve