When troubleshooting CPU performance issues on virtualized SQL Servers running on VMware, one of the first things I do is verify that the virtual machine configuration isn’t a contributing factor to the performance problem. Where a physical server has 100% of the available resources dedicated to the OS, a virtual machine doesn’t, so looking at a few basic items up front eliminates troubleshooting the wrong issue and wasting time. In the past I’ve blogged about the importance of DBAs having read-only access to Virtual Center for VMware for basic troubleshooting of performance problems. However, even without access to Virtual Center, it’s still possible to find out some basic information inside of Windows that could lead to potential host level issues that are affecting performance.

Every VMware virtual machine has two performance counter groups in Windows that are added when the VMware tools are installed in the guest; VM Processor and VM Memory. These performance counters are one of the first things I look at whenever I am working with a virtual machine on VMware, because they give you a look at what resources the VM is receiving from the hypervisor. The VM Processor group has the following counters:

- % Processor Time

- Effective VM Speed in MHz

- Host processor speed in MHz

- Limit in MHz

- Reservation in MHz

- Shares

On a VM guest that is showing a high Processor\% Processor Time in Task Manager or perfmon, checking the VM Processor counters will give an accurate account of actual resource allocations the VM guest is receiving. If the Host processor speed in MHz is 3000 and the guest has 8 virtual CPUs allocated to it, then the maximum effective speed for the VM is 24000 MHz and the Effective VM Speed in MHz counter will reflect whether the VM is actually getting the resources from the host. Usually when this is the case, you will need to start looking at the host level information to diagnose the root cause of the issue further. But in a recent client engagement, this didn’t turn out to be the case.

The client VM in this case matched the configuration described above and had a maximum effective speed of 24000 MHz but the Effective VM Speed in MHz counter was only averaging around 6900 MHz with the VM Windows Percent Processor time pegged at nearly 100%. Looking just below the Effective VM Speed in MHz counter revealed the cause of the issue: the Limit in MHz was 7000, meaning that the VM had a configured cap of CPU usage at 7000MHz in ESX, so it was consistently being throttled by the hypervisor under load.

The explanation for this was that this particular VM had been used for testing purposes in a proof of concept and was originally co-located on a busy VM host; the VM administrators didn’t want an unknown workload causing performance issues on that host. So, to ensure that it wouldn’t negatively impact the real production workloads on the host during the POC, it was limited to allow only 7000 MHz of CPU or the equivalent of 2 1/3 physical cores on the host. Ultimately, removing the VM CPU Limit in ESX eliminated the high CPU issues within Windows, and the performance problems that the client was experiencing went away.

The VM Memory counter group is just as important as the VM Processor group for identifying potential performance problems for SQL Server. The VM Memory counter group contains the following counters:

- Memory Active in MB

- Memory Ballooned in MB

- Memory Limit in MB

- Memory Mapped in MB

- Memory Overhead in MB

- Memory Reservation in MB

- Memory Shared in MB

- Memory Shared Saved in MB

- Memory Shares

- Memory Swapped in MB

- Memory Used in MB

Of these counters, the ones that I specifically look at are the Memory Ballooned in MB and the Memory Swapped in MB, both of which should be zero for SQL Server workloads. The Memory Ballooned in MB counter tells how much memory has been reclaimed from the guest VM by the balloon driver due to memory overcommit on the host, which will cause SQL Server to reduce memory usage to respond to memory pressure in Windows caused by the balloon driver inflating to take memory away from the VM. The Memory Swapped in MB counter is tracking how much memory was paged to disk by the host hypervisor due to memory overcommit on the host that couldn’t be resolved by ballooning VM guests with the balloon driver. VMware’s best practice guide for SQL Server recommends using reservations to guarantee that SQL Server doesn’t get ballooned or paged out for performance reasons but many VM administrators are hesitant to set static reservations because it reduces environmental flexibility.

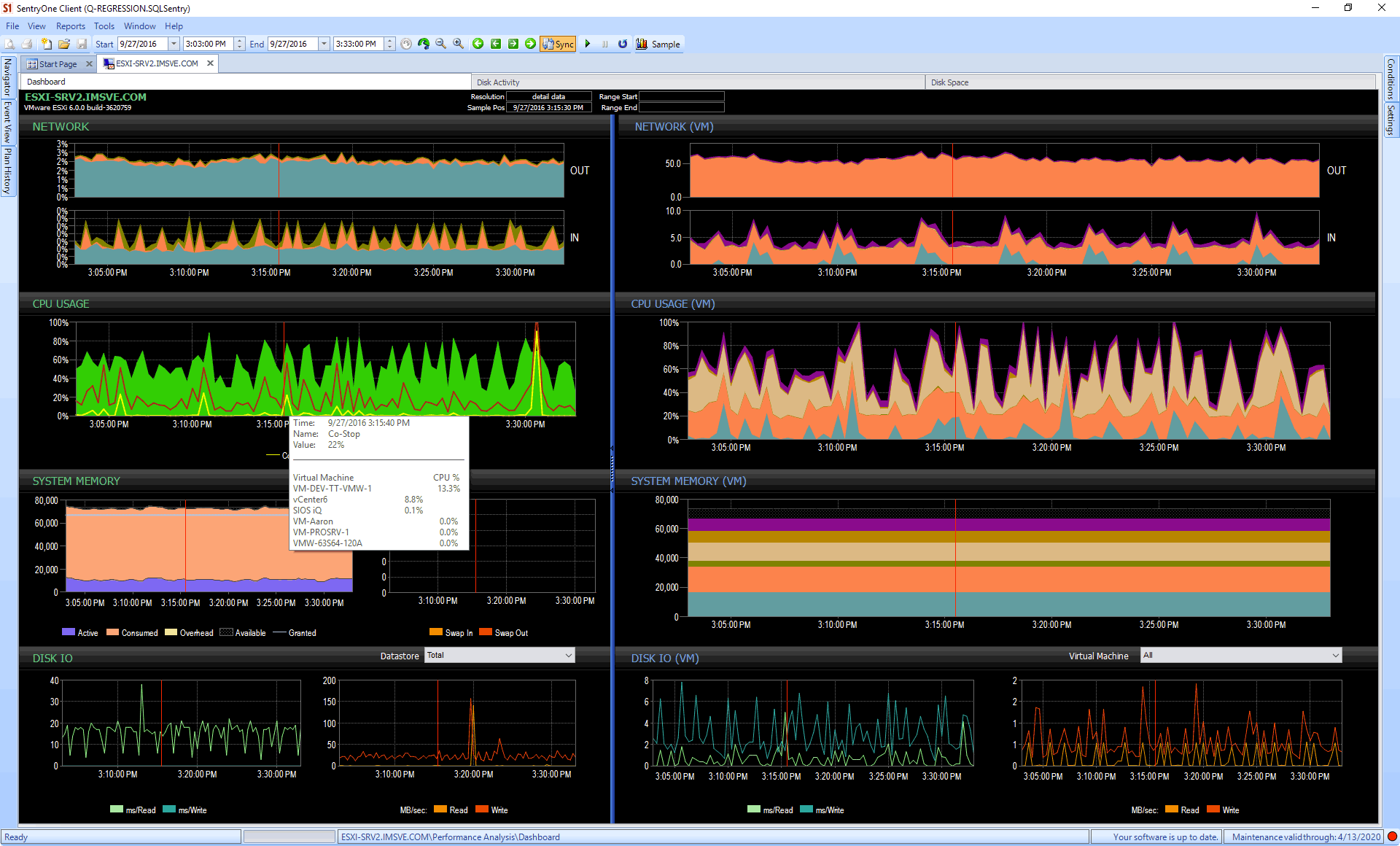

Monitoring tools, like SentryOne V Sentry, can also help. Consider the case where you might not have direct access to vCenter, but someone can set up monitoring against it on your behalf. Now you can get great visualization and insight into CPU, memory, and even disk issues – at both the guest and host level – and all the history that comes with that, too. On the dashboard below, you can see host metrics on the left (including CPU breakdowns for co-stop and ready time), and guest metrics on the right:

To try out this and other functionality from SentryOne, you can download a free trial.

Conclusion

When troubleshooting performance issues on virtualized SQL Servers on VMware, it is important to look at the problem from a holistic standpoint instead of doing “knee-jerk” troubleshooting using only limited information. The VMware-specific counters in Performance Monitor can be a great way to quickly verify that the VM is getting the basic resource allocations from the host, before taking further steps at troubleshooting the issue.

There is a typo in this – you have 24,000 Mhz which should be 2,400 Mhz

There isn't a typo, 8 vCPUS times 3000MHz is 8×3000=24,000MHz.

I agree that the "physical" capacity is 8*3k, but you have the deduct the reservation for the ESXi host.

Nothing in this post provides the host hardware configuration, which was 2 sockets, 6 cores each, with hyperthreading enabled at 3.0GHz. Only the VM configuration is addressed in the post which is smaller than host resources, hypervisor overheads don't affect the VM maximum possible clock speed calculation based on how it's configured.

Awesome!!!

Recently I came across the similar issue where server response improved where we were expecting a degradation, due to installation of a product agents.

Ultimately, it turned out that ESX sever was manipulating VM resources.

Dear Jonathon- Could you also guide for similar set of VM counters in Microsoft HyperV (instead of VMWare)? As at my workplace, virtual environments for SQL Server are there on both VMWare and HyperV.

Thanks a lot for this article.

Hey Channdeep,

I can write a future article on this, but Hyper-V would require the ability to monitor counters on the host with PerfMon, as there aren't equivalent in-guest counters to what a VMware guest has with the tools installed.

Great Post Jonathan. I was not aware of the VM counters in Windows guests.

Great info shared in this blog. Keep sharing.

Great post. Could you please provide best implementation articles for SQL Server 2016 on VMWare latest versions?

Thanks

Hi, Jonathan. Have you ever gotten to that equiv article on hyper-v? Thanks very much for this one, of course.