@rob_farley your recent stackoverflow solution to ordering by a value first then a field is genius! Wanted to thank you personally.

— Joel Sacco (@Jsac90) August 11, 2016

I saw this tweet come through…

And it made me look at what it was referring to, because I hadn't written anything 'recently' on StackOverflow about ordering data. Turns out it was this answer I'd written, which although wasn't the accepted answer, has collected over a hundred votes.

The person asking the question had a very simple problem – wanting to get certain rows to appear first. And my solution was simple:

ORDER BY CASE WHEN city = 'New York' THEN 1 ELSE 2 END, City;

It seems to have been a popular answer, including for Joel Sacco (according to that tweet above).

The idea is to form an expression, and order by that. ORDER BY doesn't care whether it's an actual column or not. You could've done the same using APPLY, if you really prefer to use a 'column' in your ORDER BY clause.

SELECT Users.*

FROM Users

CROSS APPLY

(

SELECT CASE WHEN City = 'New York' THEN 1 ELSE 2 END

AS OrderingCol

) o

ORDER BY o.OrderingCol, City;

If I use some queries against WideWorldImporters, I can show you why these two queries really are exactly the same. I'm going to query the Sales.Orders table, asking for the Orders for Salesperson 7 to appear first. I'm also going to create an appropriate covering index:

CREATE INDEX rf_Orders_SalesPeople_OrderDate

ON Sales.Orders(SalespersonPersonID) INCLUDE (OrderDate);

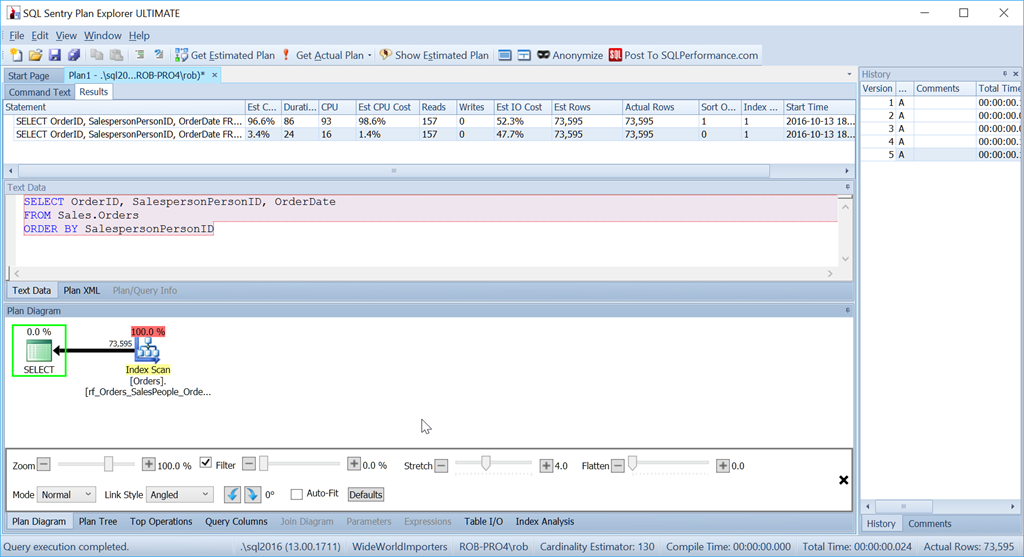

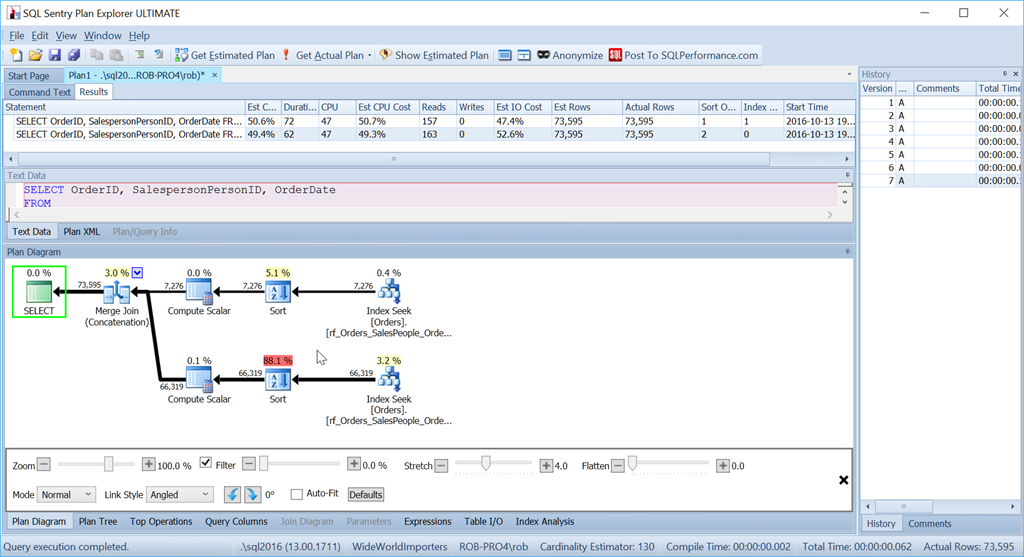

The plans for these two queries look identical. They perform identically – same reads, same expressions, they really are the same query. If there's a slight difference in the actual CPU or Duration, then that's a fluke because of other factors.

SELECT OrderID, SalespersonPersonID, OrderDate

FROM Sales.Orders

ORDER BY CASE WHEN SalespersonPersonID = 7 THEN 1 ELSE 2 END, SalespersonPersonID;

SELECT OrderID, SalespersonPersonID, OrderDate

FROM Sales.Orders

CROSS APPLY

(

SELECT CASE WHEN SalespersonPersonID = 7 THEN 1 ELSE 2 END

AS OrderingCol

) o

ORDER BY o.OrderingCol, SalespersonPersonID;

And yet this is not the query that I would actually use in this situation. Not if performance were important to me. (It usually is, but it's not always worth writing a query the long way if the amount of data is small.)

What bothers me is that Sort operator. It's 96.4% of the cost!

Consider if we simply want to order by SalespersonPersonID:

We see that this simpler query's estimated CPU cost is 1.4% of the batch, while the custom-sorted version's is 98.6%. That's SEVENTY TIMES worse. Reads are the same though – that's good. Duration is way worse, and so is CPU.

I'm not fond of Sorts. They can be nasty.

One option I have here is to add a computed column to my table and index that, but that's going to have an impact on anything which looks for all the columns on the table, such as ORMs, Power BI, or anything that does SELECT *. So that's not so great (although if we ever get to add hidden computed columns, that would make for a really nice option here).

Another option, which is more longwinded (some might suggest that would suit me – and if you thought that: Oi! Don't be so rude!), and uses more reads, is to consider what we'd do in real life if we needed to do this.

If I had a pile of 73,595 orders, sorted by Salesperson order, and I needed to return them with a particular Salesperson first, I wouldn't disregard the order they were in and simply sort them all, I'd start by diving in and finding the ones for Salesperson 7 – keeping them in the order they were in. Then I'd find the ones that weren't the ones that weren't Salesperson 7 – putting them next, and again keeping them in the order they were already in.

In T-SQL, that's done like this:

SELECT OrderID, SalespersonPersonID, OrderDate

FROM

(

SELECT OrderID, SalespersonPersonID, OrderDate,

1 AS OrderingCol

FROM Sales.Orders

WHERE SalespersonPersonID = 7

UNION ALL

SELECT OrderID, SalespersonPersonID, OrderDate,

2 AS OrderingCol

FROM Sales.Orders

WHERE SalespersonPersonID != 7

) o

ORDER BY o.OrderingCol, o.SalespersonPersonID;

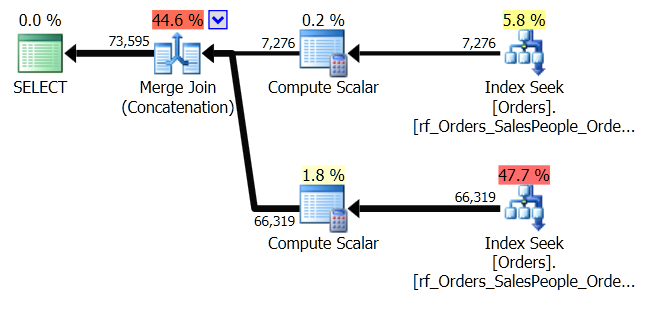

This gets two sets of data and concatenates them. But the Query Optimizer can see that it needs to maintain the SalespersonPersonID order, once the two sets are concatenated, so it does a special kind of concatenation that maintains that order. It's a Merge Join (Concatenation) join, and the plan looks like this:

You can see it's a lot more complicated. But hopefully you'll also notice that there's no Sort operator. The Merge Join (Concatenation) pulls the data from each branch, and produces a dataset which is in the right order. In this case, it will pull all 7,276 rows for Salesperson 7 first, and then pull the other 66,319, because that's the required order. Within each set, the data is in SalespersonPersonID order, which is maintained as the data flows through.

I mentioned earlier that it uses more reads, and it does. If I show the SET STATISTICS IO output, comparing the two queries, I see this:

Table 'Orders'. Scan count 1, logical reads 157, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

Table 'Orders'. Scan count 3, logical reads 163, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

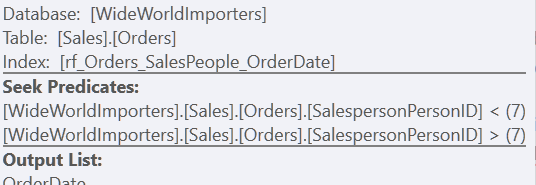

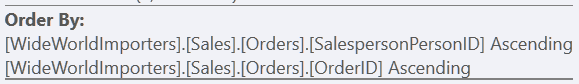

Using the "Custom Sort" version, it's just one scan of the index, using 157 reads. Using the "Union All" method, it's three scans – one for SalespersonPersonID = 7, one for SalespersonPersonID < 7, and one for SalespersonPersonID > 7. We can see those last two by looking at the properties of the second Index Seek:

For me, though, the benefit comes through in the lack of a Worktable.

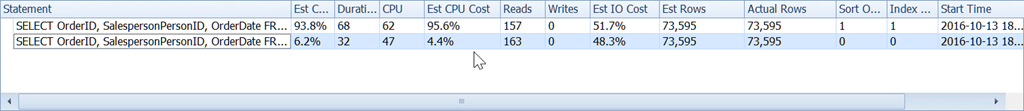

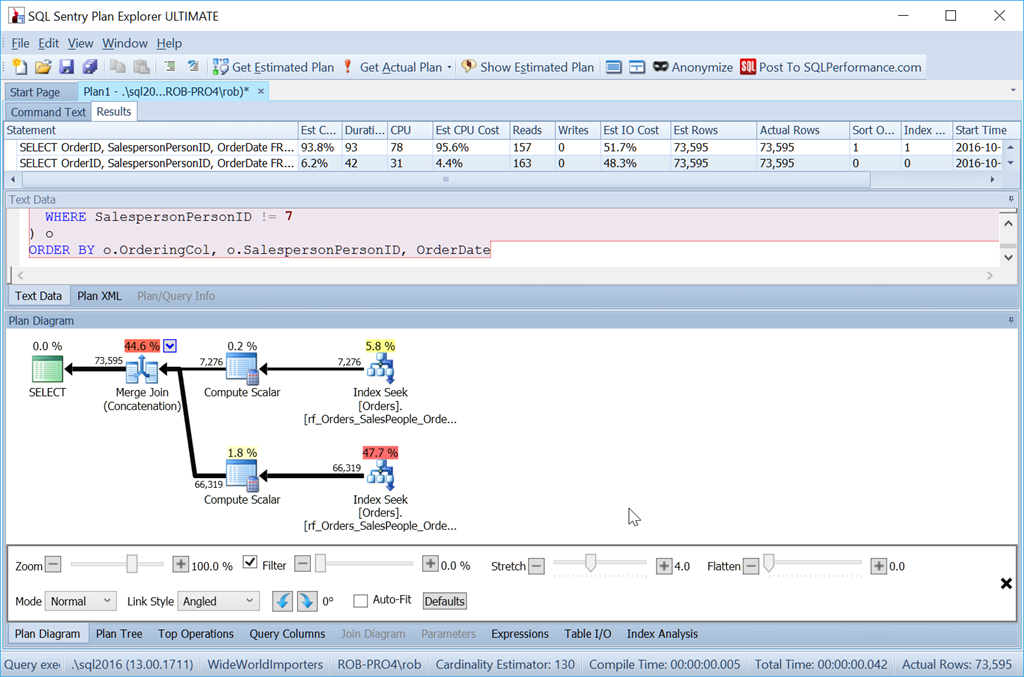

Look at the estimated CPU cost:

It's not as small as our 1.4% when we avoid the sort completely, but it's still a vast improvement over our Custom Sort method.

But a word of warning…

Suppose I had created that index differently, and had OrderDate as a key column rather than as an included column.

CREATE INDEX rf_Orders_SalesPeople_OrderDate

ON Sales.Orders(SalespersonPersonID, OrderDate);

Now, my "Union All" method doesn't work as intended at all.

Despite using exactly the same queries as before, my nice plan now has two Sort operators, and it performs nearly as badly as my original Scan + Sort version.

The reason for this is a quirk of the Merge Join (Concatenation) operator, and the clue is in the Sort operator.

It's ordering by SalespersonPersonID followed by OrderID – which is the clustered index key of the table. It chooses this because this is known to be unique, and it's a smaller set of columns to sort by than SalespersonPersonID followed by OrderDate followed by OrderID, which is the dataset order produced by three index range scans. One of those times when the Query Optimizer doesn't notice a better option that's right there.

With this index, we would need our dataset ordered by OrderDate as well to produce our preferred plan.

SELECT OrderID, SalespersonPersonID, OrderDate

FROM

(

SELECT OrderID, SalespersonPersonID, OrderDate,

1 AS OrderingCol

FROM Sales.Orders

WHERE SalespersonPersonID = 7

UNION ALL

SELECT OrderID, SalespersonPersonID, OrderDate,

2 AS OrderingCol

FROM Sales.Orders

WHERE SalespersonPersonID != 7

) o

ORDER BY o.OrderingCol, o.SalespersonPersonID, OrderDate;

So it's definitely more effort. The query is longer for me to write, it's more reads, and I have to have an index without extra key columns. But it's certainly quicker. With even more rows, the impact is bigger still, and I don't have to risk a Sort spilling to tempdb either.

For small sets, my StackOverflow answer is still good. But when that Sort operator is costing me in performance, then I'm going with the Union All / Merge Join (Concatenation) method.

Hi Rob,

I'm testing this on AdventureWorks2014 and I realised that the 3VL has to be added in the second WHERE clause of the UNION operator:

SELECT

SalesOrderID

, SalesPersonID

, OrderDate

FROM

(

SELECT

SalesOrderID

, SalesPersonID

, OrderDate

, 1 AS OrderingCol

FROM Sales.SalesOrderHeader

WHERE SalesPersonID = 282

UNION ALL

SELECT

SalesOrderID

, SalesPersonID

, OrderDate

, 2 AS OrderingCol

FROM Sales.SalesOrderHeader

WHERE

(

SalesPersonID != 282

OR SalesPersonID IS NULL — 3VL

)

) AS O

ORDER BY

O.OrderingCol

, O.SalesPersonID

–, OrderDate;

GO

Yes – if you need to deal with NULLs, then you don't get to use !=

Superb article! Very interesting, well researched and thoughtfully presented.

Did you run a test changing the amount of rows up or down by a couple of orders of magnitude? 7 million instead of 70,000 and 700 instead of 70,000.

Thanks Scott – glad you liked it.

Not specifically for this post. But I know that Sorts don't scale at all well, and that Concatenation scales very well. So I would expect to see little benefit when dealing with 700 rows, and massive benefit when dealing with 7M rows.

Sweet post – I've followed the steps but I'm seeing parallelism in my plan. Did you adjust cost threshold?

Whenever I'm teaching about plans I try to avoid parallelism being a factor. To do this I might have set Maximum Degree of Parallelism to 1, or pushed CTfP up. But in general, I would recommend not leaving those values at their defaults. As a starter set MDoP to 4 and CTfP to 50. You'll probably find that would stop all these simple plans from going parallel.

Such a wonderful explanation Sir. I have been puzzled with such issues a lot of times finally an approach in my TSQL arsenal. :)