Internals of the Seven SQL Server Sorts – Part 1

As far as graphical execution plans are concerned, there is just one icon for a physical sort in SQL Server:

This same icon is used for the three logical sort operators: Sort, Top N Sort, and Distinct Sort:

Going a level deeper, there are four different implementations of Sort in the execution engine (not counting batch sorting for optimized loop joins, which is not a full sort, and not visible in plans anyway). If you are using SQL Server 2014, the number of execution engine Sort implementations increases to seven:

- CQScanSortNew

- CQScanTopSortNew

- CQScanIndexSortNew

- CQScanPartitionSortNew (SQL Server 2014 only)

- CQScanInMemSortNew

- In-Memory OLTP (Hekaton) natively compiled procedure Top N Sort (SQL Server 2014 only)

- In-Memory OLTP (Hekaton) natively compiled procedure General Sort (SQL Server 2014 only)

This article looks at these sort implementations and when each is used in SQL Server. Part one covers the first four items on the list.

1. CQScanSortNew

This is the most general sort class, used when none of the other available options is applicable. General sort uses a workspace memory grant reserved just before query execution begins. This grant is proportional to cardinality estimates and average row size expectations, and cannot be increased after query execution begins.

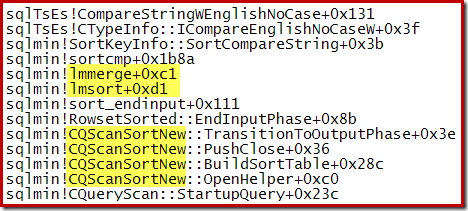

The current implementation appears to use a variety of internal merge sort (perhaps binary merge sort), transitioning to external merge sort (with multiple passes if necessary) if the reserved memory turns out to be insufficient. External merge sort uses physical tempdb space for sort runs that do not fit in memory (commonly known as a sort spill). General sort may also be configured to apply distinctness during the sorting operation.
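The transition from an internal sort to an external merge sort can be illustrated with a small Python sketch. This is not SQL Server's code: the function name `sort_with_budget`, the row-count budget standing in for the memory grant, and the temporary files standing in for tempdb are all assumptions made for illustration.

```python
import heapq
import pickle
import tempfile


def sort_with_budget(rows, max_rows_in_memory):
    """Sort rows, spilling sorted runs to temporary files when the budget is exceeded.

    Returns (sorted_rows, spilled_run_count). The row budget is a stand-in
    for a workspace memory grant that cannot grow during execution.
    """
    if max_rows_in_memory < 1:
        raise ValueError("max_rows_in_memory must be at least 1")

    run_files = []
    buffer = []
    for row in rows:
        if len(buffer) == max_rows_in_memory:
            # Budget exhausted: sort the buffer and write it out as one run.
            run_files.append(_write_run(sorted(buffer)))
            buffer = []
        buffer.append(row)

    if not run_files:
        # Everything fit in memory: a purely internal sort.
        return sorted(buffer), 0

    # Merge the spilled runs with the final in-memory run in a single pass.
    runs = [_read_run(f) for f in run_files] + [iter(sorted(buffer))]
    result = list(heapq.merge(*runs))
    for f in run_files:
        f.close()
    return result, len(run_files)


def _write_run(sorted_rows):
    """Write one sorted run to a temporary file and rewind it for reading."""
    f = tempfile.TemporaryFile()
    for row in sorted_rows:
        pickle.dump(row, f)
    f.seek(0)
    return f


def _read_run(f):
    """Yield the rows of one spilled run in the order they were written."""
    while True:
        try:
            yield pickle.load(f)
        except EOFError:
            return
```

With ten input rows and a budget of four rows, the sketch writes two runs before merging; with a budget of ten or more, nothing is spilled. A real engine would also need multiple merge passes when there are too many runs to merge at once, which this sketch does not attempt.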

The following partial stack trace shows an example of the CQScanSortNew class sorting strings using an internal merge sort:

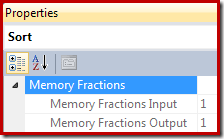

In execution plans, Sort provides information about the fraction of the overall query workspace memory grant that is available to the Sort when reading records (the input phase), and the fraction available when sorted output is being consumed by parent plan operators (the output phase).

The memory grant fraction is a number between 0 and 1 (where 1 = 100% of the granted memory) and is visible in SSMS by highlighting the Sort and looking in the Properties window. The example below was taken from a query with only a single Sort operator, so it has the full query workspace memory grant available during both input and output phases:

The memory fractions reflect the fact that during its input phase, Sort has to share the overall query memory grant with concurrently-executing memory-consuming operators below it in the execution plan. Similarly, during the output phase, Sort has to share granted memory with concurrently-executing memory-consuming operators above it in the execution plan.

The query processor is smart enough to know that some operators are blocking (stop-and-go), effectively marking boundaries where the memory grant can be recycled and reused. In parallel plans, the memory grant fraction available to a general Sort is split evenly between threads, and cannot be rebalanced at runtime in case of skew (a common cause of spilling in parallel sort plans).
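A rough arithmetic sketch shows why an even split can cause spills under skew. The function name, the parameters, and the simple "rows times row size" memory model are assumptions for illustration; the real accounting includes overheads that are ignored here.

```python
def threads_that_spill(grant_kb, fraction, rows_per_thread, row_size_bytes):
    """Return the indexes of threads whose rows exceed an even share of the grant.

    grant_kb        -- overall query workspace memory grant (hypothetical figure)
    fraction        -- memory fraction available to the Sort (0 to 1)
    rows_per_thread -- actual row count arriving at each thread
    row_size_bytes  -- assumed fixed size of each row
    """
    dop = len(rows_per_thread)
    # The Sort's share of the grant is divided evenly, regardless of skew.
    share_bytes = grant_kb * 1024 * fraction / dop
    return [
        thread
        for thread, rows in enumerate(rows_per_thread)
        if rows * row_size_bytes > share_bytes
    ]


# Same 40,000 rows in total, 100 bytes each, 4 MB grant, four threads.
even = threads_that_spill(4096, 1.0, [10000, 10000, 10000, 10000], 100)
skewed = threads_that_spill(4096, 1.0, [37000, 1000, 1000, 1000], 100)
```

In this example the evenly distributed input fits on every thread, while the skewed input overflows the first thread's share even though the total grant would have been enough for all the rows.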

SQL Server 2012 and later includes additional information about the minimum workspace memory grant required to initialize memory-consuming plan operators, and the desired memory grant (the "ideal" amount of memory estimated to be needed to complete the whole operation in memory). In a post-execution ("actual") execution plan, there is also new information about any delays in acquiring the memory grant, the maximum amount of memory actually used, and how the memory reservation was distributed across NUMA nodes.

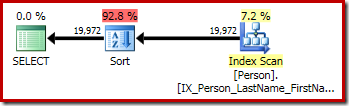

The following AdventureWorks examples all use a CQScanSortNew general sort:

    -- An Ordinary Sort (CQScanSortNew)
    SELECT
        P.FirstName,
        P.MiddleName,
        P.LastName
    FROM Person.Person AS P
    ORDER BY
        P.FirstName,
        P.MiddleName,
        P.LastName;

    -- Distinct Sort (also CQScanSortNew)
    SELECT DISTINCT
        P.FirstName,
        P.MiddleName,
        P.LastName
    FROM Person.Person AS P
    ORDER BY
        P.FirstName,
        P.MiddleName,
        P.LastName;

    -- Same query expressed using GROUP BY
    -- Same Distinct Sort (CQScanSortNew) execution plan
    SELECT
        P.FirstName,
        P.MiddleName,
        P.LastName
    FROM Person.Person AS P
    GROUP BY
        P.FirstName,
        P.MiddleName,
        P.LastName
    ORDER BY
        P.FirstName,
        P.MiddleName,
        P.LastName;

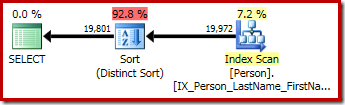

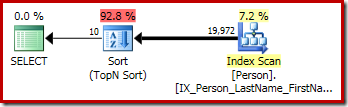

The first query (a non-distinct sort) produces the following execution plan:

The second and third (equivalent) queries produce this plan:

CQScanSortNew can be used for both logical general Sort and logical Distinct Sort.

2. CQScanTopSortNew

CQScanTopSortNew is a subclass of CQScanSortNew used to implement a Top N Sort (as the name suggests). CQScanTopSortNew delegates much of the core work to CQScanSortNew, but modifies the detailed behaviour in different ways, depending on the value of N.

For N > 100, CQScanTopSortNew is essentially just a regular CQScanSortNew sort that automatically stops producing sorted rows after N rows. For N <= 100, CQScanTopSortNew retains only the current Top N results during the sort operation, and keeps track of the lowest key value that currently qualifies.
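The small-N behaviour can be sketched in Python as follows. This is only an illustration of the idea of retaining the current Top N rows and comparing each new row against a boundary key; the function name `top_n_sort` and the use of a sorted list are my assumptions, not a description of the RowsetTopN implementation.

```python
import bisect


def top_n_sort(rows, n):
    """Return the n lowest rows in ascending order, holding at most n rows at once."""
    if n <= 0:
        return []
    current = []  # always sorted, never longer than n
    for row in rows:
        if len(current) < n:
            # Still filling up: every row qualifies.
            bisect.insort(current, row)
        elif row < current[-1]:
            # The row beats the boundary key: keep it and discard the old boundary row.
            bisect.insort(current, row)
            current.pop()
        # Otherwise the row cannot be in the Top N and is discarded immediately.
    return current
```

For example, `top_n_sort([9, 4, 7, 1, 8, 2, 6], 3)` returns `[1, 2, 4]` without ever holding more than three rows. Because memory use is bounded by N rather than by the input size, a sort of this kind has no need to spill.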

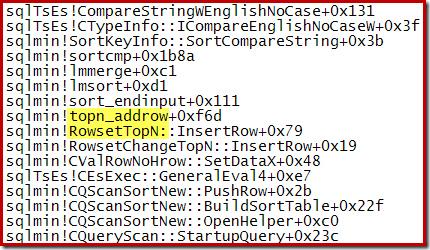

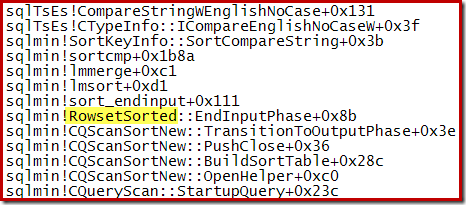

For example, during an optimized Top N Sort (where N <= 100) the call stack features RowsetTopN whereas with the general sort in section 1 we saw RowsetSorted:

For a Top N Sort where N > 100, the call stack at the same stage of execution is the same as the general sort seen earlier:

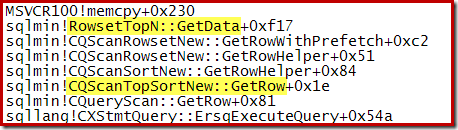

Notice that the CQScanTopSortNew class name does not appear in either of those stack traces. This is simply due to the way sub-classing works. At other points during the execution of these queries, CQScanTopSortNew methods (e.g. Open, GetRow, and CreateTopNTable) do appear explicitly on the call stack. As an example, the following was taken at a later point in query execution and does show the CQScanTopSortNew class name:

Top N Sort and the Query Optimizer

The query optimizer knows nothing about Top N Sort, which is an execution engine operator only. When the optimizer produces an output tree with a physical Top operator immediately above a (non-distinct) physical Sort, a post-optimization rewrite can collapse the two physical operations into a single Top N Sort operator. Even in the N > 100 case, this represents a saving over passing rows iteratively between a Sort output and a Top input.

The following query uses a couple of undocumented trace flags to show the optimizer output and the post-optimization rewrite in action:

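    SELECT TOP (10)
        P.FirstName,
        P.MiddleName,
        P.LastName
    FROM Person.Person AS P
    ORDER BY
        P.FirstName,
        P.MiddleName,
        P.LastName
    -- 3604: send trace output to the client (Messages tab)
    -- 8607: show the optimizer's output tree
    -- 7352: show the tree after post-optimization rewrites
    OPTION (QUERYTRACEON 3604, QUERYTRACEON 8607, QUERYTRACEON 7352);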

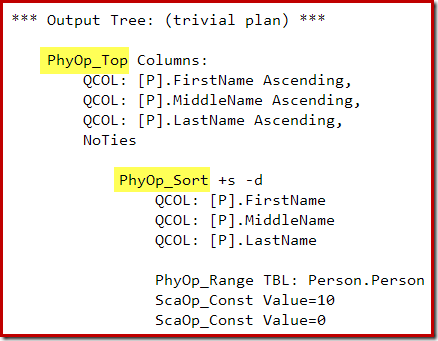

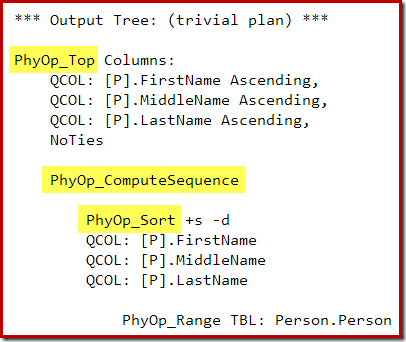

The optimizer's output tree shows separate physical Top and Sort operators:

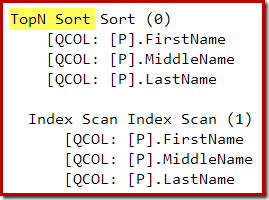

After the post-optimization rewrite, the Top and Sort have been collapsed into a single Top N Sort:

The graphical execution plan for the T-SQL query above shows the single Top N Sort operator:

Breaking the Top N Sort rewrite

The Top N Sort post-optimization rewrite can only collapse an adjacent Top and non-distinct Sort into a Top N Sort. Adding DISTINCT (or the equivalent GROUP BY clause) to the query above will prevent the Top N Sort rewrite:

    SELECT DISTINCT TOP (10)
        P.FirstName,
        P.MiddleName,
        P.LastName
    FROM Person.Person AS P
    ORDER BY
        P.FirstName,
        P.MiddleName,
        P.LastName;

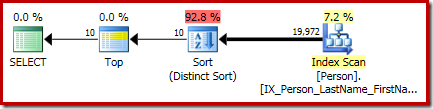

The final execution plan for this query features separate Top and Sort (Distinct Sort) operators:

The Sort there is the general CQScanSortNew class running in distinct mode as seen in section 1 earlier.

A second way to prevent the rewrite to a Top N Sort is to introduce one or more additional operators between the Top and the Sort. For example:

    SELECT TOP (10)
        P.FirstName,
        P.MiddleName,
        P.LastName,
        rn = RANK() OVER (ORDER BY P.FirstName)
    FROM Person.Person AS P
    ORDER BY
        P.FirstName,
        P.MiddleName,
        P.LastName;

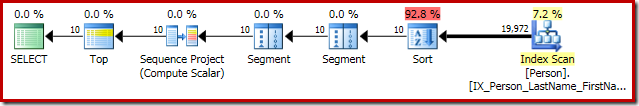

The query optimizer's output now happens to have an operation between the Top and the Sort, so a Top N Sort is not generated during the post-optimization rewrite phase:

The execution plan is:

The compute sequence (implemented as two Segments and a Sequence Project) between the Top and Sort prevents the collapse of the Top and Sort to a single Top N Sort operator. Correct results will still be obtained from this plan of course, but execution may be a little less efficient than it could have been with the combined Top N Sort operator.
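The conditions for the rewrite described in this section can be summarised as a small tree transformation. The sketch below is a toy model: the `Op` class, the operator names, and the `collapse_top_sort` function are hypothetical and bear no relation to the real internal structures.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Op:
    """A toy physical operator with at most one child."""
    name: str                      # e.g. "Top", "Sort", "TopNSort", "Scan"
    child: Optional["Op"] = None
    distinct: bool = False         # only meaningful for Sort
    rows: Optional[int] = None     # N, for Top and TopNSort


def collapse_top_sort(op):
    """Rewrite Top immediately above a non-distinct Sort as a single TopNSort."""
    if op is None:
        return None
    child = collapse_top_sort(op.child)
    if (
        op.name == "Top"
        and child is not None
        and child.name == "Sort"
        and not child.distinct
    ):
        # Adjacent Top and non-distinct Sort: collapse into one operator.
        return Op("TopNSort", child=child.child, rows=op.rows)
    return Op(op.name, child=child, distinct=op.distinct, rows=op.rows)


scan = Op("Scan")
plain = collapse_top_sort(Op("Top", Op("Sort", scan), rows=10))
distinct = collapse_top_sort(Op("Top", Op("Sort", scan, distinct=True), rows=10))
separated = collapse_top_sort(Op("Top", Op("SequenceProject", Op("Sort", scan)), rows=10))
```

Only the first tree is rewritten. The distinct Sort and the tree with an operator between the Top and the Sort are returned unchanged, mirroring the two ways of breaking the rewrite shown above.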

3. CQScanIndexSortNew

CQScanIndexSortNew is used only for sorting in DDL index building plans. It reuses some of the general sort facilities we have already seen, but adds specific optimizations for index insertions. It is also the only sort class that can dynamically request more memory after execution has begun.

Cardinality estimation is often accurate for an index building plan because the total number of rows in the table is usually a known quantity. That is not to say that memory grants for index building plan sorts will always be accurate; it just makes an inaccurate grant a little harder to demonstrate. So, the following example uses an undocumented, but reasonably well-known, extension to the UPDATE STATISTICS command to fool the optimizer into thinking the table we are building an index on has only one row:

    -- Test table
    CREATE TABLE dbo.People
    (
        FirstName dbo.Name NOT NULL,
        LastName dbo.Name NOT NULL
    );
    GO

    -- Copy rows from Person.Person
    INSERT dbo.People WITH (TABLOCKX)
    (
        FirstName,
        LastName
    )
    SELECT
        P.FirstName,
        P.LastName
    FROM Person.Person AS P;
    GO

    -- Pretend the table only has 1 row and 1 page
    UPDATE STATISTICS dbo.People
    WITH ROWCOUNT = 1, PAGECOUNT = 1;
    GO

    -- Index building plan
    CREATE CLUSTERED INDEX cx
    ON dbo.People (LastName, FirstName);
    GO

    -- Tidy up
    DROP TABLE dbo.People;

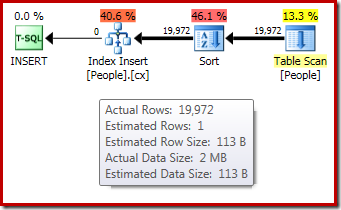

The post-execution ("actual") execution plan for the index build does not show a warning for a spilled sort (when run on SQL Server 2012 or later) despite the 1-row estimate and the 19,972 rows actually sorted:

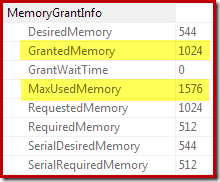

Confirmation that the initial memory grant was dynamically expanded comes from looking at the root iterator's properties. The query was initially granted 1024KB of memory, but ultimately consumed 1576KB:

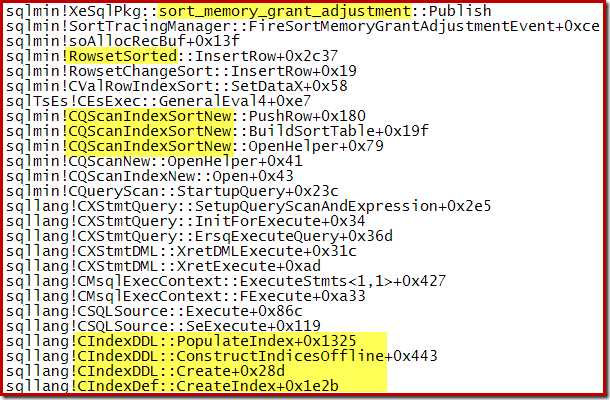

The dynamic increase in granted memory can also be tracked using the Debug channel Extended Event sort_memory_grant_adjustment. This event is generated each time the memory allocation is dynamically increased. If this event is being monitored, we can capture a stack trace when it is published, either via Extended Events (with some awkward configuration and a trace flag) or from an attached debugger, as below:

Dynamic memory grant expansion can also help with parallel index build plans where the distribution of rows across threads is uneven. The amount of memory that can be consumed this way is not unlimited, however. SQL Server checks each time an expansion is needed to see if the request is reasonable given the resources available at that time.
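The general shape of "ask for more, but only while the request is feasible" can be sketched as below. Everything here is an assumption made for illustration: the function name, the fixed expansion step, and the simple "step must fit in available memory" test are hypothetical, and the actual feasibility checks SQL Server performs are not documented.

```python
def load_with_expansion(row_count, rows_per_kb, initial_grant_kb, step_kb, available_kb):
    """Simulate a sort that grows its grant in fixed steps until its rows fit.

    Returns (final_grant_kb, expansions, spilled). All parameters are
    hypothetical stand-ins for state the server tracks internally.
    """
    grant_kb = initial_grant_kb
    expansions = 0
    needed_kb = -(-row_count // rows_per_kb)  # ceiling division
    while grant_kb < needed_kb:
        if step_kb > available_kb:
            # The request is judged infeasible: stop growing and spill instead.
            return grant_kb, expansions, True
        grant_kb += step_kb
        available_kb -= step_kb
        expansions += 1
    return grant_kb, expansions, False


# 16,000 rows at 10 rows per KB need 1,600 KB; the grant starts at 1,024 KB.
plenty = load_with_expansion(16000, 10, 1024, 64, available_kb=1000)
scarce = load_with_expansion(16000, 10, 1024, 64, available_kb=200)
```

With plenty of memory available, the simulated sort expands nine times and completes in memory. With only 200 KB available it expands three times, is then refused, and has to spill.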

Some insight into this process can be obtained by enabling undocumented trace flag 1504, together with 3604 (for message output to the console) or 3605 (output to the SQL Server error log). If the index build plan is parallel, only 3605 is effective because parallel workers cannot send trace messages cross-thread to the console.

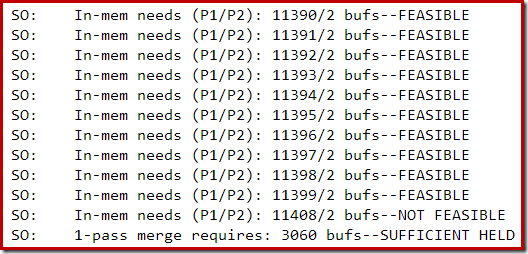

The following section of trace output was captured while building a moderately large index on a SQL Server 2014 instance with limited memory:

Memory expansion for the sort proceeded until the request was considered infeasible, at which point it was determined that enough memory was already held for a single-pass sort spill to complete.

4. CQScanPartitionSortNew

This class name might suggest that this type of sort is used for partitioned table data, or when building indexes on partitioned tables, but neither of those is actually the case. Sorting partitioned data uses CQScanSortNew or CQScanTopSortNew as normal; sorting rows for insertion to a partitioned index generally uses CQScanIndexSortNew as seen in section 3.

The CQScanPartitionSortNew sort class is only present in SQL Server 2014. It is only used when sorting rows by partition id, prior to insertion into a partitioned clustered columnstore index. Note that it is only used for partitioned clustered columnstore; regular (non-partitioned) clustered columnstore insert plans do not benefit from a sort.

Inserts into a partitioned clustered columnstore index will not always feature a sort. It is a cost-based decision that depends on the estimated number of rows to be inserted. If the optimizer estimates that it is worth sorting the inserts by partition to optimize I/O, the columnstore insert operator will have the DMLRequestSort property set to true, and a CQScanPartitionSortNew sort may appear in the execution plan.

The demo in this section uses a permanent table of sequential numbers. If you do not have one of those, the following script can be used to create one:

    -- Itzik Ben-Gan's row generator
    WITH
        L0 AS (SELECT 1 AS c UNION ALL SELECT 1),
        L1 AS (SELECT 1 AS c FROM L0 AS A CROSS JOIN L0 AS B),
        L2 AS (SELECT 1 AS c FROM L1 AS A CROSS JOIN L1 AS B),
        L3 AS (SELECT 1 AS c FROM L2 AS A CROSS JOIN L2 AS B),
        L4 AS (SELECT 1 AS c FROM L3 AS A CROSS JOIN L3 AS B),
        L5 AS (SELECT 1 AS c FROM L4 AS A CROSS JOIN L4 AS B),
        Nums AS (SELECT ROW_NUMBER() OVER(ORDER BY (SELECT NULL)) AS n FROM L5)
    SELECT
        -- Destination column type integer NOT NULL
        ISNULL(CONVERT(integer, N.n), 0) AS n
    INTO dbo.Numbers
    FROM Nums AS N
    WHERE N.n >= 1
    AND N.n <= 1000000
    OPTION (MAXDOP 1);
    GO

    ALTER TABLE dbo.Numbers
    ADD CONSTRAINT PK_Numbers_n
    PRIMARY KEY CLUSTERED (n)
    WITH (SORT_IN_TEMPDB = ON, MAXDOP = 1, FILLFACTOR = 100);

The demo itself involves creating a partitioned clustered columnstore indexed table, and inserting enough rows (from the Numbers table above) to convince the optimizer to use a pre-insert partition sort:

    CREATE PARTITION FUNCTION PF (integer)
    AS RANGE RIGHT
    FOR VALUES (1000, 2000, 3000);
    GO

    CREATE PARTITION SCHEME PS
    AS PARTITION PF
    ALL TO ([PRIMARY]);
    GO

    -- A partitioned heap
    CREATE TABLE dbo.Partitioned
    (
        col1 integer NOT NULL,
        col2 integer NOT NULL DEFAULT ABS(CHECKSUM(NEWID())),
        col3 integer NOT NULL DEFAULT ABS(CHECKSUM(NEWID()))
    )
    ON PS (col1);
    GO

    -- Convert heap to partitioned clustered columnstore
    CREATE CLUSTERED COLUMNSTORE INDEX ccsi
    ON dbo.Partitioned
    ON PS (col1);
    GO

    -- Add rows to the partitioned clustered columnstore table
    INSERT dbo.Partitioned (col1)
    SELECT N.n
    FROM dbo.Numbers AS N
    WHERE N.n BETWEEN 1 AND 4000;
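To see which partition id each inserted row maps to, the RANGE RIGHT boundaries from the script can be modelled in a few lines of Python. The helper name `partition_id` is mine; the only fact relied on is that with RANGE RIGHT each boundary value belongs to the partition on its right.

```python
from bisect import bisect_right
from collections import Counter

# Boundary values taken from the PF partition function in the script.
BOUNDARIES = [1000, 2000, 3000]


def partition_id(value, boundaries=BOUNDARIES):
    """RANGE RIGHT: a boundary value is the first value of the next partition."""
    return bisect_right(boundaries, value) + 1


# Partition ids for the 4,000 rows inserted by the demo.
rows_per_partition = Counter(partition_id(n) for n in range(1, 4001))
```

Under this model the insert targets all four partitions: 999 rows in partition 1 (values 1 to 999), 1,000 rows each in partitions 2 and 3, and 1,001 rows in partition 4 (values 3000 to 4000).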

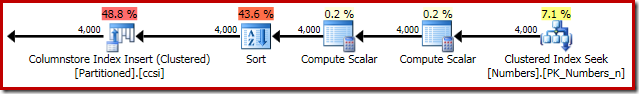

The execution plan for the insert shows the sort used to ensure rows arrive at the clustered columnstore insert iterator in partition id order:

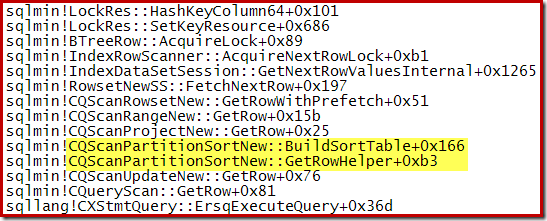

A call stack captured while the CQScanPartitionSortNew sort was in progress is shown below:

There is something else interesting about this sort class. Sorts normally consume their entire input in their Open method call. After sorting, they return control to their parent operator. Later, the sort starts to produce sorted output rows one at a time in the usual way via GetRow calls. CQScanPartitionSortNew is different, as you can see in the call stack above: It does not consume its input during its Open method – it waits until GetRow is called by its parent for the first time.
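The difference in timing can be modelled with two toy iterator classes. The class names, the `open`/`get_row` method names, and the use of `None` as an end-of-rows marker are assumptions for illustration only; the point is simply when the child input is first read.

```python
class EagerSort:
    """Consumes and sorts its whole input during open(), like a typical sort."""

    def __init__(self, child):
        self._child = child
        self._sorted = None

    def open(self):
        self._sorted = iter(sorted(self._child))

    def get_row(self):
        return next(self._sorted, None)  # None marks end of rows


class DeferredSort:
    """Leaves its input untouched in open() and reads it on the first get_row()."""

    def __init__(self, child):
        self._child = child
        self._sorted = None

    def open(self):
        pass  # nothing is read from the child here

    def get_row(self):
        if self._sorted is None:
            self._sorted = iter(sorted(self._child))
        return next(self._sorted, None)


def tracked_input(rows, log):
    """A child input that records the moment it is first read."""
    log.append("input read")
    yield from rows
```

Calling `open()` on `EagerSort` reads the child immediately, whereas `DeferredSort` reads nothing until its parent asks for the first row.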

Not every sort on partition id that appears in an execution plan inserting rows into a partitioned clustered columnstore index will be a CQScanPartitionSortNew sort. If the sort appears immediately to the right of the columnstore index insert operator, the chances are very good that it is a CQScanPartitionSortNew sort.

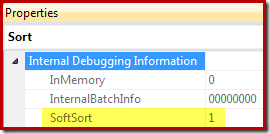

Finally, CQScanPartitionSortNew is one of only two sort classes that set the Soft Sort property exposed when Sort operator execution plan properties are generated with undocumented trace flag 8666 enabled:

A "soft sort" uses only its primary memory grant and never spills. It doesn't guarantee fully-sorted output. Each sort run using the available memory grant will be sorted. A "soft sort" represents a best effort given the resource available. This property can be used to infer that a Sort is implemented with CQScanPartitionSortNew without attaching a debugger. The meaning of the InMemory property flag shown above will be covered in part 2. It does not indicate whether a regular sort was performed in memory or not.

A soft sort is acceptable when sorting by partition id because this is purely a performance optimization to avoid switching between partitions more than once when maintaining a partitioned index. Without a fully effective sort, performance may be reduced by increased partition switches but the end result will still be correct.
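A best-effort sort of this kind can be sketched as follows. This is my reading of "each sort run using the available memory grant will be sorted", not the actual implementation; the function names and the row-count budget standing in for the memory grant are assumptions.

```python
def soft_sort(partition_ids, max_rows_in_memory):
    """Yield rows as a sequence of individually sorted runs, never spilling."""
    buffer = []
    for pid in partition_ids:
        buffer.append(pid)
        if len(buffer) == max_rows_in_memory:
            # Memory is full: emit this run in sorted order and start again.
            yield from sorted(buffer)
            buffer = []
    yield from sorted(buffer)


def partition_switches(partition_ids):
    """Count how often consecutive rows target a different partition."""
    ids = list(partition_ids)
    return sum(1 for a, b in zip(ids, ids[1:]) if a != b)


unsorted_ids = [1, 2, 3, 4] * 3
soft = list(soft_sort(unsorted_ids, 6))    # budget too small for a full sort
full = list(soft_sort(unsorted_ids, 12))   # budget large enough for everything
```

In this example the unsorted input switches partition 11 times, the soft-sorted output 7 times, and the fully sorted output only 3 times. All three orderings contain exactly the same rows, so the insert is correct either way; only the number of partition switches differs.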

Summary of Part One

- CQScanSortNew is the general sort class used when no other option is applicable. It appears to use a variety of internal merge sort in memory, transitioning to external merge sort using tempdb if the granted workspace memory turns out to be insufficient. This class can be used for General Sort and Distinct Sort.

- CQScanTopSortNew implements Top N Sort. Where N <= 100, an in-memory internal merge sort is performed that never spills to tempdb. Only the current top N items are retained in memory during the sort. For N > 100, CQScanTopSortNew is equivalent to a CQScanSortNew sort that automatically stops after N rows have been output. An N > 100 sort can spill to tempdb if necessary.

- The Top N Sort seen in execution plans is a post-query-optimization rewrite. If the query optimizer produces an output tree with an adjacent Top and non-distinct Sort, this rewrite can collapse the two physical operators into a single Top N Sort operator.

- CQScanIndexSortNew is used only in index building DDL plans. It is the only standard sort class that can dynamically acquire more memory during execution. Index building sorts can still spill to disk in some circumstances, including when SQL Server decides a requested memory increase is not compatible with the current workload.

- CQScanPartitionSortNew is only present in SQL Server 2014 and is used only to optimize inserts to a partitioned clustered columnstore index. It delivers a "soft sort".

The second part of this article will look at CQScanInMemSortNew, and the two In-Memory OLTP natively compiled stored procedure sorts.

6 thoughts on “Internals of the Seven SQL Server Sorts – Part 1”


Maybe the soft sort is a partial sorting. The CS index really only needs sorted chunks of 1 million rows at a time. It does not matter in what partition order. Also we can alternate between partitions easily as long as we get nice 1m row chunks that target one partition each time.

I'd like to see a partial sort operation just like the hash aggregate (partial) operator. It could be used to optimize key lookup. That operator would never spill. In case memory was not enough it would simply emit the current batch.

Also, the hard-coded TOP 100 thing is really unnerving to me. The perf loss when you go to 101 can be orders of magnitude because you might end up spilling multiple times now. O(N) becomes O(N log N) when you go over 100. Why not use a dynamic value? For example, as many rows as can be sorted without spilling.

The next unnerving thing about sorting is that it *should* generate purely sequential reads and writes to tempdb but it often does not. This can be seen by looking at the OS level IO counters. The sum of reads and writes almost never reaches the sequential bandwidth of my desktop magnetic disk. It can do like 150 MB/s and I usually see SQL Server sort at 10-50 MB/s. That must be due to random IO since the CPU is not saturated in any way.

It is hard to understand how such a fundamental operator can be so unoptimized. The necessary tuning work probably isn't even that hard or labor-intensive. There just has to be somebody to take a look.

There is already a "best effort" batch sort for nested loops joins (mentioned in the introduction; see also http://blogs.msdn.com/b/craigfr/archive/2009/03/18/optimized-nested-loops-joins.aspx).

Yes the hard-coding of 100 is arbitrary, but one of the advantages of SQL Server is that it has fewer arcane tuning "knobs" than some other products. This can be frustrating for advanced tuning, I agree.

SQL Server sorting is optimized in some clever ways, but it is fair to say that some of those optimizations might be out of date by now. See for example http://citeseerx.ist.psu.edu/viewdoc/summary?doi=10.1.1.83.1080

It will be interesting to see what improvements have been made in SQL Server 2016, now that batch mode sorting has been announced.

I love reading articles by Paul.

Just another stellar article.

Thanks Paul.

Hey Paul, thanks for the nice article.

Interestingly, the example in section 4. CQScanPartitionSortNew doesn't require a sort in SQL Server 2016 (tested on CTP 2.1). That's even when testing with a large number of rows such as WHERE N.n BETWEEN 1 AND 500000 or unfiltered. It seems like the optimizer smartly figures out based on the ordered scan of the data from the index that the rows are already ordered by the partition.

Thanks, Mike. Much appreciated!

Thanks, Itzik, that's a very interesting observation. I've just finished downloading CTP 2.2, so I'll take a look and update the article if I find anything useful.