The Repeatable Read Isolation Level

The serializable isolation level provides complete protection from concurrency effects that can threaten data integrity and lead to incorrect query results. Using serializable isolation means that if a transaction that can be shown to produce correct results with no concurrent activity, it will continue to perform correctly when competing with any combination of concurrent transactions.

This is a very powerful guarantee, and one that probably matches the intuitive transaction isolation expectations of many T-SQL programmers (though in truth, relatively few of these will routinely use serializable isolation in production).

The SQL standard defines three additional isolation levels that offer far weaker ACID isolation guarantees than serializable, in return for potentially higher concurrency and fewer potential side-effects like blocking, deadlocking, and commit-time aborts.

Unlike serializable isolation, the other isolation levels are defined solely in terms of certain concurrency phenomena that might be observed. The next-strongest of the standard isolation levels after serializable is named repeatable read. The SQL standard specifies that transactions at this level allow a single concurrency phenomenon known as a phantom.

Just as we have previously seen important differences between the common intuitive meaning of ACID transaction properties and reality, the phantom phenomenon encompasses a wider range of behaviours than is often appreciated.

This post in the series looks at the actual guarantees provided by the repeatable read isolation level, and shows some of the phantom-related behaviours that can be encountered. To illustrate some points, we will refer to the following simple example query, where the simple task is to count the total number of rows in a table:

SELECT COUNT_BIG(*)

FROM dbo.SomeTable;

Repeatable Read

One odd thing about the repeatable read isolation level is it does not actually guarantee that reads are repeatable, at least in one commonly-understood sense. This is another example where intuitive meaning alone can be misleading. Executing the same query twice within the same repeatable read transaction can indeed return different results.

In addition to that, the SQL Server implementation of repeatable read means that a single read of a set of data might miss some rows that logically ought to be considered in the query result. While undeniably implementation-specific, this behaviour is fully in line with the definition of repeatable read contained in the SQL standard.

The last thing I want to note quickly before delving into details, is that repeatable read in SQL Server does not provide a point-in-time view of the data.

Non-repeatable Reads

The repeatable read isolation level provides a guarantee that data will not change for the life of the transaction once it has been read for the first time.

There are a couple of subtleties contained in that definition. First, it allows data to change after the transaction starts but before the data is first accessed. Second, there is no guarantee that the transaction will actually encounter all the data that logically qualifies. We will see examples of both of these shortly.

There is one other preliminary we need to get out of the way quickly, that has to do with the example query we will be using. In fairness, the semantics of this query are a little fuzzy. At the risk of sounding slightly philosophical, what does it mean to count the number of rows in the table? Should the result reflect the state of the table as it was at some particular point in time? Should this point in time be the start or end of the transaction, or something else?

This might seem a bit nit-picky, but the question is a valid one in any database that supports concurrent data reads and modifications. Executing our example query could take an arbitrarily long length of time (given a large enough table, or resource constraints for example) so concurrent changes are not only possible, they might be unavoidable.

The fundamental issue here is the potential for the concurrency phenomenon referred to as a phantom in the SQL standard. While we are counting rows in the table, another concurrent transaction might insert new rows in a place we have already checked, or change a row we have not checked yet in such a way that it moves to a place we have already looked. People often think of phantoms as rows that might magically appear when read for a second time, in a separate statement, but the effects can be much more subtle than that.

Concurrent Insert Example

This first example shows how concurrent inserts can produce a non-repeatable read and/or result in rows being skipped. Imagine that our test table initially contains five rows with the values shown below:

We now set the isolation level to repeatable read, start a transaction, and run our counting query. As you would expect, the result is five. No great mystery so far.

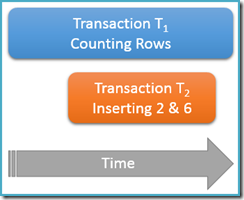

Still executing inside the same repeatable read transaction, we run the counting query again, but this time while a second concurrent transaction is inserting new rows into the same table. The diagram below shows the sequence of events, with the second transaction adding rows with values 2 and 6 (you might have noticed these values were conspicuous by their absence just above):

If our counting query were running at the serializable isolation level, it would be guaranteed to count either five or seven rows (see the previous article in this series if you need a refresher on why that is the case). How does running at the less isolated repeatable read level affect things?

Well, repeatable read isolation guarantees that the second run of the counting query will see all the previously-read rows, and they will be in the same state as before. The catch is that repeatable read isolation says nothing about how the transaction should treat the new rows (the phantoms).

Imagine that our row-counting transaction (T1) has a physical execution strategy where rows are searched in an ascending index order. This is a common case, for instance when a forward-ordered b-tree index scan is employed by the execution engine. Now, just after transaction T1 counts rows 1 and 3 in ascending order, transaction T2 might sneak in, insert new rows 2 and 6, and then commit its transaction.

Though we are primarily thinking of logical behaviours at this point, I should mention that there is nothing in the SQL Server locking implementation of repeatable read to prevent transaction T2 from doing this. Shared locks taken by transaction T1 on previously-read rows prevent those rows from being changed, but they do not prevent new rows from being inserted into the range of values tested by our counting query (unlike the key-range locks in locking serializable isolation would).

Anyway, with the two new rows committed, transaction T1 continues its ascending-order search, eventually encountering rows 4, 5, 6, and 7. Note that T1 sees new row 6 in this scenario, but not new row 2 (due to the ordered search, and its position when the insert occurred).

The outcome is that the repeatable read counting query reports that the table contains six rows (values 1, 3, 4, 5, 6 and 7). This result is inconsistent with the previous result of five rows obtained inside the same transaction. The second read counted phantom row 6 but missed phantom row 2. So much for the intuitive meaning of a repeatable read!

Concurrent Update Example

A similar situation can arise with a concurrent update instead of an insert. Imagine our test table is reset to contain the same five rows as before:

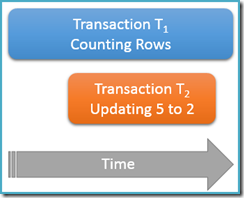

This time, we will only run our counting query once at the repeatable read isolation level, while a second concurrent transaction updates the row with value 5 to have a value of 2:

Transaction T1 again starts counting rows, (in ascending order) encountering rows 1 and 3 first. Now, transaction T2 slips in, changes the value of row 5 to 2 and commits:

I have shown the updated row in the same position as before to make the change clear, but the b-tree index we are scanning maintains the data in logical order, so the real picture is closer to this:

The point is that transaction T1 is concurrently scanning this same structure in forward order, being currently positioned just after the entry for value 3. The counting query continues scanning forward from that point, finding rows 4 and 7 (but not row 5 of course).

To summarize, the counting query saw rows 1, 3, 4, and 7 in this scenario. It reports a count of four rows – which is strange, because the table seems to have contained five rows throughout!

A second run of the counting query within the same repeatable read transaction would report five rows, for similar reasons as before. As a final note, in case you are wondering, concurrent deletions do not provide an opportunity for a phantom-based anomaly under repeatable read isolation.

Final Thoughts

The preceding examples both used ascending-order scans of an index structure to present a simple view of the sort of effects phantoms can have on a repeatable-read query. It is important to understand that these illustrations do not rely in any important way on the scan direction or the fact that a b-tree index was used. Please do not form the view that ordered scans are somehow responsible and therefore to be avoided!

The same concurrency effects can be seen with a descending-order scan of an index structure, or in a variety of other physical data access scenarios. The broad point is that phantom phenomena are specifically allowed (though not required) by the SQL standard for transactions at the repeatable read level of isolation.

Not all transactions require the complete isolation guarantee provided by serializable isolation, and not many systems could tolerate the side effects if they did. Nevertheless, it pays to have a good understanding of exactly which guarantees the various isolation levels provide.

Next Time

The next part in this series looks at the even weaker isolation guarantees offered by SQL Server's default isolation level, read committed.

1 thought on “The Repeatable Read Isolation Level”

Comments are closed.

The main issue for a db programmer is what to use and why.

The short answer is, if you expect concurrency, don't expect serializable to work for you!

It force range-locking of an index used or potentially used. this cause even simple "insert" transactions to deadlock each other if you've a 2nd index.

Repeatable read, as shown here, is good enough unless you're reading a range of records and compute a critical value based on that.

Under repeatable reads, you'd have to lock something up YOURSELF in both transactions to ensure serializability.

Typically, you compute from a range of value and update a different table. If you lock that soon-to-be-updated record before you either

read the records or insert new ones, you ensure the phantom problem goes away.

consider a typical items table and a group table, both updated on transaction, as well as a full read-update transaction

Insert items (@itme, @gid…@x…)

updates groups set count=count+@x

where gid=@gid

vs

updates groups set count=(select x from items where gid=@gid) where id=@gid

[this typically run if there is a global change or out-of-order insert, etc]

using

select * from groups with(updlock) where gid=@gid

at the entry to both transactions, they become serializable even if you're in repeatable reads.

Note that in serializable mode:

1. if you don't use with(updlock) at the start of the 1st trans, you're likely to be deadlocked when you "get to do the update" on a heavy-trans system

2. in serializable mode, the first transaction can easily deadlock with "itself" (same trans, another thread) which of course, make "serializable" useless.