Aggregates and Partitioning

The changes in the internal representation of partitioned tables between SQL Server 2005 and SQL Server 2008 resulted in improved query plans and performance in the majority of cases (especially when parallel execution is involved). Unfortunately, the same changes caused some things that worked well in SQL Server 2005 to suddenly not work so well in SQL Server 2008 and later. This post looks at a one example where the SQL Server 2005 query optimizer produced a superior execution plan compared with later versions.

Sample Table and Data

The examples in this post use the following partitioned table and data:

CREATE PARTITION FUNCTION PF (integer)

AS RANGE RIGHT

FOR VALUES

(

10000, 20000, 30000, 40000, 50000,

60000, 70000, 80000, 90000, 100000,

110000, 120000, 130000, 140000, 150000

);

CREATE PARTITION SCHEME PS

AS PARTITION PF

ALL TO ([PRIMARY]);

GO

CREATE TABLE dbo.T4

(

RowID integer IDENTITY NOT NULL,

SomeData integer NOT NULL,

CONSTRAINT PK_T4

PRIMARY KEY CLUSTERED (RowID)

ON PS (RowID)

);

INSERT dbo.T4 WITH (TABLOCKX)

(SomeData)

SELECT

ABS(CHECKSUM(NEWID()))

FROM dbo.Numbers AS N

WHERE

N.n BETWEEN 1 AND 150000;

CREATE NONCLUSTERED INDEX nc1

ON dbo.T4 (SomeData)

ON PS (RowID);

Partitioned Data Layout

Our table has a partitioned clustered index. In this case, the clustering key also serves as the partitioning key (though this is not a requirement, in general). Partitioning results in separate physical storage units (rowsets) that the query processor presents to users as a single entity.

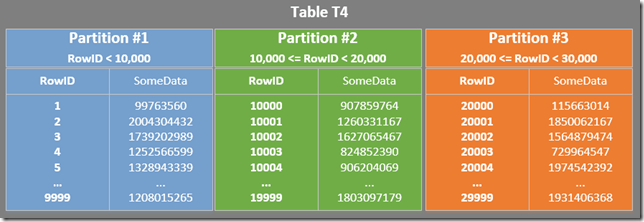

The diagram below shows the first three partitions of our table (click to enlarge):

The nonclustered index is partitioned in the same way (it is “aligned”):

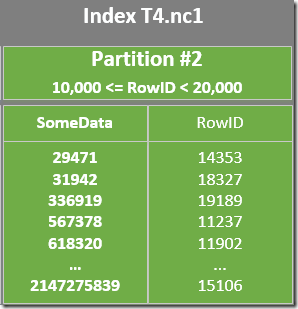

Each partition of the nonclustered index covers a range of RowID values. Within each partition, the data is ordered by SomeData (but the RowID values will not be ordered in general).

The MIN/MAX Problem

It is reasonably well-known that MIN and MAX aggregates do not optimize well on partitioned tables (unless the column being aggregated also happens to be the partitioning column). This limitation (which still exists in SQL Server 2014 CTP 1) has been written about many times over the years; my favourite coverage is in this article by Itzik Ben-Gan. To briefly illustrate the issue, consider the following query:

SELECT MIN(SomeData)

FROM dbo.T4;

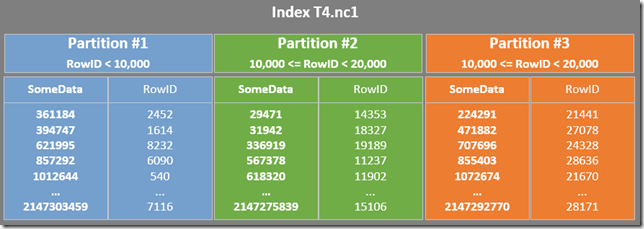

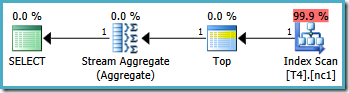

The execution plan on SQL Server 2008 or above is as follows:

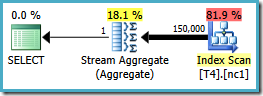

This plan reads all 150,000 rows from the index and a Stream Aggregate computes the minimum value (the execution plan is essentially the same if we request the maximum value instead). The SQL Server 2005 execution plan is slightly different (though no better):

This plan iterates over partition numbers (listed in the Constant Scan) fully scanning a partition at a time. All 150,000 rows are still eventually read and processed by the Stream Aggregate.

Look back at the partitioned table and index diagrams and think about how the query could be processed more efficiently on our data set. The nonclustered index seems a good choice to resolve the query because it contains SomeData values in an order that could be exploited when computing the aggregate.

Now, the fact that the index is partitioned does complicate matters a bit: each partition of the index is ordered by the SomeData column, but we cannot simply read the lowest value from any particular partition to get the right answer to the whole query.

Once the essential nature of the problem is understood, a human being can see that an efficient strategy would be to find the single lowest value of SomeData in each partition of the index, and then take the lowest value from the per-partition results.

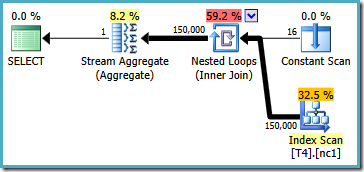

This is essentially the workaround that Itzik presents in his article; rewrite the query to compute an aggregate per-partition (using APPLY syntax) and then aggregate again over those per-partition results. Using that approach, the rewritten MIN query produces this execution plan (see Itzik’s article for the exact syntax):

This plan reads partition numbers from a system table, and retrieves the lowest value of SomeData in each partition. The final Stream Aggregate just computes the minimum over the per-partition results.

The important feature in this plan is that it reads a single row from each partition (exploiting the sort order of the index within each partition). It is much more efficient than the optimizer's plan that processed all 150,000 rows in the table.

MIN and MAX within a single partition

Now consider the following query to find the minimum value in the SomeData column, for a range of RowID values that are contained within a single partition:

SELECT MIN(SomeData)

FROM dbo.T4

WHERE RowID >= 15000

AND RowID < 18000;

We have seen that the optimizer has trouble with MIN and MAX over multiple partitions, but we would expect those limitations not to apply to a single partition query.

The single partition is the one bounded by the RowID values 10,000 and 20,000 (refer back to the partitioning function definition). The partitioning function was defined as RANGE RIGHT, so the 10,000 boundary value belongs to partition #2 and the 20,000 boundary belongs to partition #3. The range of RowID values specified by our new query is therefore contained within partition 2 alone.

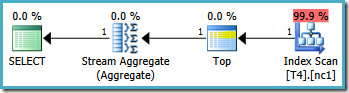

The graphical execution plans for this query looks the same on all SQL Server versions from 2005 onward:

Plan Analysis

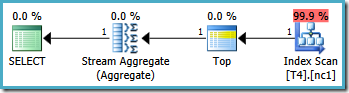

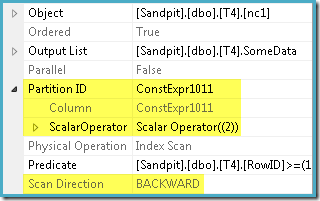

The optimizer took the RowID range specified in the WHERE clause and compared it with the partition function definition to determine that only partition 2 of the nonclustered index needed to be accessed. The SQL Server 2005 plan properties for the Index Scan shows the single-partition access clearly:

The other highlighted property is the Scan Direction. The order of the scan differs depending on whether the query is looking for the minimum or maximum SomeData value. The nonclustered index is ordered (per partition, remember) on ascending SomeData values, so the Index Scan direction is FORWARD if the query asks for the minimum value, and BACKWARD if the maximum value is needed (the screen shot above was taken from the MAX query plan).

There is also a residual Predicate on the Index Scan to check that the RowID values scanned from partition 2 match the WHERE clause predicate. The optimizer assumes that RowID values are distributed pretty randomly through the nonclustered index, so it expects to find the first row that matches the WHERE clause predicate pretty quickly. The partitioned data layout diagram shows that the RowID values are indeed quite randomly distributed in the index (which is ordered by the SomeData column remember):

The Top operator in the query plan limits the Index Scan to a single row (from either the low or high end of the index depending on the Scan Direction). Index Scans can be problematic in query plans, but the Top operator makes it an efficient option here: the scan can only ever produce one row, then it stops. The Top and ordered Index Scan combination effectively performs a seek to the highest or lowest value in the index that also matches the WHERE clause predicates. A Stream Aggregate also appears in the plan to ensure that a NULL is generated in case no rows are returned by the Index Scan. Scalar MIN and MAX aggregates are defined to return a NULL when the input is an empty set.

Overall, this is a very efficient strategy, and the plans have an estimated cost of just 0.0032921 units as a result. So far so good.

The Boundary Value Problem

This next example modifies the top end of the RowID range:

SELECT MIN(SomeData)

FROM dbo.T4

WHERE RowID >= 15000

AND RowID < 20000;

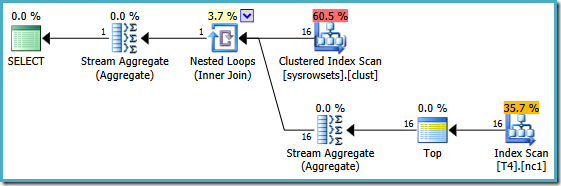

Notice that the query excludes the 20,000 value by using a “less than” operator. Recall that the 20,000 value belongs to partition 3 (not partition 2) because the partition function is defined as RANGE RIGHT. The SQL Server 2005 optimizer handles this situation correctly, producing the optimal single-partition query plan, with an estimated cost of 0.0032878:

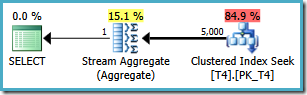

However, the same query produces a different plan on SQL Server 2008 and later (including SQL Server 2014 CTP 1):

Now we have a Clustered Index Seek (instead of the desired Index Scan and Top operator combination). All 5,000 rows that match the WHERE clause are processed through the Stream Aggregate in this new execution plan. The estimated cost of this plan is 0.0199319 units – more than six times the cost of the SQL Server 2005 plan.

Cause

The SQL Server 2008 (and later) optimizers do not quite get the internal logic right when an interval references, but excludes, a boundary value belonging to a different partition. The optimizer incorrectly thinks that multiple partitions will be accessed, and concludes that it cannot use the single-partition optimization for MIN and MAX aggregates.

Workarounds

One option is to rewrite the query using >= and <= operators so we do not reference a boundary value from another partition (even to exclude it!):

SELECT MIN(SomeData)

FROM dbo.T4

WHERE RowID >= 15000

AND RowID <= 19999;

This results in the optimal plan, touching a single partition:

Unfortunately, it is not always possible to specify correct boundary values in this way (depending on the type of the partitioning column). An example of that is with date & time types where it is best to use half-open intervals. Another objection to this workaround is more subjective: the partitioning function excludes one boundary from the range, so it seems most natural to write the query also using half-open interval syntax.

A second workaround is to specify the partition number explicitly (and retaining the half-open interval):

SELECT MIN(SomeData)

FROM dbo.T4

WHERE RowID >= 15000

AND RowID < 20000

AND $PARTITION.PF(RowID) = 2;

This produces the optimal plan, at the expensive of requiring an extra predicate and relying on the user to work out what the partition number should be.

Of course it would be better if the 2008-and-later optimizers produced the same optimal plan SQL Server 2005 did. In a perfect world, a more comprehensive solution would also address the multi-partition case, making the workaround Itzik describes unnecessary as well.