Don't get me wrong; I love filtered indexes. They create opportunities for much more efficient use of I/O, and finally allow us to implement proper ANSI-compliant unique constraints (where more than one NULL is allowed). However, they are far from perfect. I wanted to point out a few areas where filtered indexes could be improved and make them much more useful and practical to a large portion of workloads out there.

First, the good news

Filtered indexes can make very quick work of previously expensive queries, and do so using less space (and hence reduced I/O, even when scanned).

A quick example using Sales.SalesOrderDetailEnlarged (built using this script by Jonathan Kehayias (@SQLPoolBoy)). This table has 4.8MM rows, with 587 MB of data and 363 MB of indexes. There is only one nullable column, CarrierTrackingNumber, so let's play with that one. As is, the table currently has about half of these values (2.4MM) as NULL. I'm going to reduce that to about 240K to simulate a scenario where a small percentage of the rows in the table are actually eligible for an index, in order to best highlight the benefits of a filtered index. The following query affects 2.17MM rows, leaving 241,507 rows with a NULL value for CarrierTrackingNumber:

UPDATE Sales.SalesOrderDetailEnlarged

SET CarrierTrackingNumber = 'x'

WHERE CarrierTrackingNumber IS NULL

AND SalesOrderID % 10 <> 3;

Now, let's say there is a business requirement where we constantly want to review orders that have products that have yet to be assigned a tracking number (think orders that are split up and shipped separately). On the current table we would run these queries (and I've added the DBCC commands to ensure cold cache in every case):

DBCC DROPCLEANBUFFERS;

DBCC FREEPROCCACHE;

SELECT COUNT(*)

FROM Sales.SalesOrderDetailEnlarged

WHERE CarrierTrackingNumber IS NULL;

SELECT ProductID, SalesOrderID

FROM Sales.SalesOrderDetailEnlarged

WHERE CarrierTrackingNumber IS NULL;

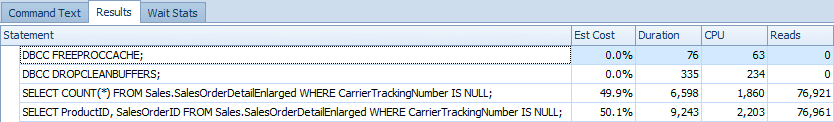

Which require clustered index scans and yield the following runtime metrics (as captured with SQL Sentry Plan Explorer):

In the "old" days (meaning since SQL Server 2005), we would have created this index (and in fact, even in SQL Server 2012, this is the index SQL Server recommends):

CREATE INDEX IX_NotVeryHelpful

ON [Sales].[SalesOrderDetailEnlarged] ([CarrierTrackingNumber])

INCLUDE ([SalesOrderID],[ProductID]);

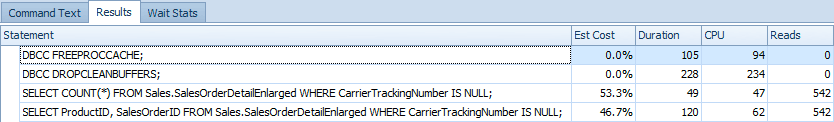

With that index in place, and running the above queries again, here are the metrics, with both queries using an index seek as you might expect:

And then dropping that index and creating a slightly different one, simply adding a WHERE clause:

CREATE INDEX IX_Filtered_CTNisNULL

ON [Sales].[SalesOrderDetailEnlarged] ([CarrierTrackingNumber])

INCLUDE ([SalesOrderID],[ProductID])

WHERE CarrierTrackingNumber IS NULL;

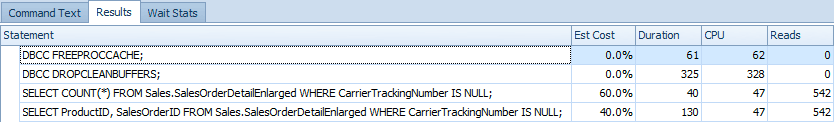

We get these results, and both queries use the filtered index for their seeks:

Here is the additional space required by each index, compared to the reduction in runtime and I/O of the above queries:

| Index | Index space | Added space | Duration | Reads |

|---|---|---|---|---|

| No dedicated index | 363 MB | 15,700ms | ~164,000 | |

| Non-filtered index | 530 MB | 167 MB (+46%) | 169ms | 1,084 |

| Filtered index | 367 MB | 4 MB (+1%) | 170ms | 1,084 |

So, as you can see, the filtered index delivers performance improvements that are almost identical to the non-filtered index (since both are able to obtain their data using the same number of reads), but at a much lower storage cost, since the filtered index only has to store and maintain the rows that match the filter predicate.

Now, let's put the table back to its original state:

UPDATE Sales.SalesOrderDetailEnlarged

SET CarrierTrackingNumber = NULL

WHERE CarrierTrackingNumber = 'x';

DROP INDEX IX_NotVeryHelpful ON Sales.SalesOrderDetailEnlarged;

DROP INDEX IX_Filtered_CTNisNULL ON Sales.SalesOrderDetailEnlarged;

Tim Chapman (@chapmandew) and Michelle Ufford (@sqlfool) have done a fantastic job outlining the performance benefits of filtered indexes in their own ways, and you should check out their posts as well:

Also, ANSI-compliant unique constraints (sort of)

I thought I'd also briefly mention ANSI-compliant unique constraints. In SQL Server 2005, we would create a unique constraint like this:

CREATE TABLE dbo.Personnel

(

EmployeeID INT PRIMARY KEY,

SSN CHAR(9) NULL,

-- ... other columns ...

CONSTRAINT UQ_SSN UNIQUE(SSN)

);

(We could also create a unique non-clustered index instead of a constraint; the underlying implementation is essentially the same.)

Now, this is no problem if SSNs are known at the time of entry:

INSERT dbo.Personnel(EmployeeID, SSN)

VALUES(1,'111111111'),(2,'111111112');

It's also fine if we have the occasional SSN that is not known at the time of entry (think a Visa applicant or perhaps even a foreign worker that doesn't have an SSN and never will):

INSERT dbo.Personnel(EmployeeID, SSN)

VALUES(3,NULL);

So far, so good. But what happens when we have a second employee with an unknown SSN?

INSERT dbo.Personnel(EmployeeID, SSN)

VALUES(4,NULL);

Result:

Violation of UNIQUE KEY constraint 'UQ_SSN'. Cannot insert duplicate key in object 'dbo.Personnel'. The duplicate key value is (<NULL>).

The statement has been terminated.

So at any one time, only one NULL value can exist in this column. Unlike most scenarios, this is one case where SQL Server treats two NULL values as equal (rather than determining that equality is simply unknown and, in turn, false). Folks have been complaining about this inconsistency for years.

If this is a requirement, we can now work around this using filtered indexes:

ALTER TABLE dbo.Personnel DROP CONSTRAINT UQ_SSN;

GO

CREATE UNIQUE INDEX UQ_SSN ON dbo.Personnel(SSN)

WHERE SSN IS NOT NULL;

Now our 4th insert works just fine, since uniqueness is only enforced on the non-NULL values. This is kind of cheating, but it does meet the basic requirements that the ANSI standard intended (even though SQL Server does not allow us to use ALTER TABLE ... ADD CONSTRAINT syntax to create a filtered unique constraint).

But, hold the phone

These are great examples of what we can do with filtered indexes, but there are a lot of things we still can't do, and several limitations and issues that come up as a result.

Statistics updates

This is one of the more important limitations IMHO. Filtered indexes don't benefit from auto-updating of stats based on a percentage change of the subset of the table that is identified by the filter predicate; it is based (like all non-filtered indexes) on churn against the whole table. This means that, depending on what percentage of the table is in the filtered index, the number of rows in the index could quadruple or halve and the statistics won't update unless you do so manually. Kimberly Tripp has given some great information about this (and Gail Shaw cites an example where it took 257,000 updates before statistics were updated for a filtered index that contained only 10,000 rows):

http://www.sqlskills.com/blogs/kimberly/filtered-indexes-and-filtered-stats-might-become-seriously-out-of-date/

http://www.sqlskills.com/blogs/kimberly/category/filtered-indexes/

Also, Kimberly's colleague, Joe Sack (@JosephSack), has filed a Connect item that suggests correcting this behavior for both filtered indexes and filtered statistics.

Filter expression limitations

There are several constructs you can't use in a filter predicate, such as NOT IN, OR and dynamic / non-deterministic predicates like WHERE col >= DATEADD(DAY, -1, GETDATE()). Also, the optimizer may not recognize a filtered index if the predicate does not exactly match the WHERE clause in the index definition. Here are a few Connect items that try to coax some support for better coverage here:

| Filtered index does not allow filters on disjunctions | (closed: by design) |

| Filtered index creation failed with NOT IN clause | (closed: by design) |

| Support for more complex WHERE clause in filtered indexes | (active) |

Other potential uses currently not possible

We currently can't create a filtered index on a persisted computed column, even if it is deterministic. We can't point a foreign key at a unique filtered index; if we want an index to support the foreign key in addition to the queries supported by the filtered index, we must create a second, redundant, non-filtered index. And here are a few other similar limitations that have either been overlooked or not considered yet:

Issues with MERGE

And MERGE makes yet another appearance on my "watch out" list:

While one of these (seemingly closely-related) bugs says that it is fixed in SQL Server 2012, you may need to contact PSS if you are hitting any variation of this issue, particularly on earlier versions (or stop using MERGE, as I have suggested before).

Tool / DMV / built-ins limitations

There are many DMVs, DBCC commands, system procedures and client tools that we start to rely on over time. However, not all of these things are updated to take advantage of new features; filtered indexes are no exception. The following Connect items point out some issues that may trip you up if you are expecting them to work with filtered indexes:

For the last three, don't hold your breath – Microsoft is quite unlikely to invest any time in the sp_ procedures, DMVs, INFORMATION_SCHEMA views, etc. Instead see Kimberly Tripp's sp_helpindex rewrites, which include information about filtered indexes along with other new features that Microsoft has left behind.

Optimizer Limitations

There are several Connect items that describe cases where filtered indexes *could* be used by the optimizer, but instead are ignored. In some cases these are not considered "bugs" but rather "gaps in functionality"…

| SQL does not use filtered index on a simple query | (closed: by design) |

| Filtered Index execution plan is not optimized | (closed: won't fix) |

| Filtered index not used and key lookup with no output | (closed: won't fix) |

| Usage of Filtered Index on BIT Column depends on exact SQL expression used in WHERE clause | (active) |

| Linked server query does not optimize properly when a filtered unique index exists | (closed: won't fix) |

| Row_Number() gives unpredictable results over Linked Servers where Filtered Indexes used | (closed: no repro) |

| Obvious filtered index not used by QP | (closed: by design) |

| Recognize unique filtered indexes as unique | (active) |

Paul White (@SQL_Kiwi) recently posted here on SQLPerformance.com a post that goes into great detail about a couple of the above optimizer limitations.

And Tim Chapman wrote a great post outlining some other limitations of filtered indexes – such as the inability to match the predicate to a local variable (fixed in 2008 R2 SP1) and the inability to specify a filtered index in an index hint.

Conclusion

Filtered indexes have great potential and I had extremely high hopes for them when they were first introduced in SQL Server 2008. However, most of the limitations that shipped with their first version still exist today, one and a half (or two, depending on your perspective) major releases later. The above seems like a pretty extensive laundry list of items that need to be addressed, but I did not mean for it to come across that way. I just want people to be aware of the vast number of potential issues they may need to consider when taking advantage of filtered indexes.

Thanks for the extensive amount of work you must have done to drag all of these articles together. I'm currently looking into filtered statistics for use on a multi-TB db and being aware of these limitations is a huge help.

Thanks for this information. Do you know whether a filtered index on a column that is a foreign key (for example, on ManagerID referencing Employee.ID) with an IS NOT NULL filter is used in assertions for UPDATEs and DELETEs on Employee? I usualy create indexes on foreign key columns of large tables to avoid table scans on these tables for every UPDATE or DELETE on the referenced tables. Quite often these columns are rather sparse, meaning most rows do not contain a reference, and using filtered indexes would save both space and I/O time.

Hi Dony-

Interesting question, and in thinking it through, I would expect that that the engine would use the filtered index. The fact that it's a foreign key isn't a factor. I did a quick test using scripts I used in an older foreign keys post (http://www.sqlperformance.com/2012/11/t-sql-queries/benefits-indexing-foreign-keys) and the filtered index was used. I would recommend testing it out in a test environment on your side, to confirm, but it should use the filtered index just fine. Hope that helps!

Erin